TPM 2.0 and Pluton: Hardware Root of Trust Explained

TL;DR: Building a reliable quantum computer requires roughly 1,000 fragile physical qubits per logical qubit due to surface code error correction overhead. New code families like LDPC and neutral-atom platforms are racing to slash that ratio, with some teams claiming it could drop to as few as 5-to-1.

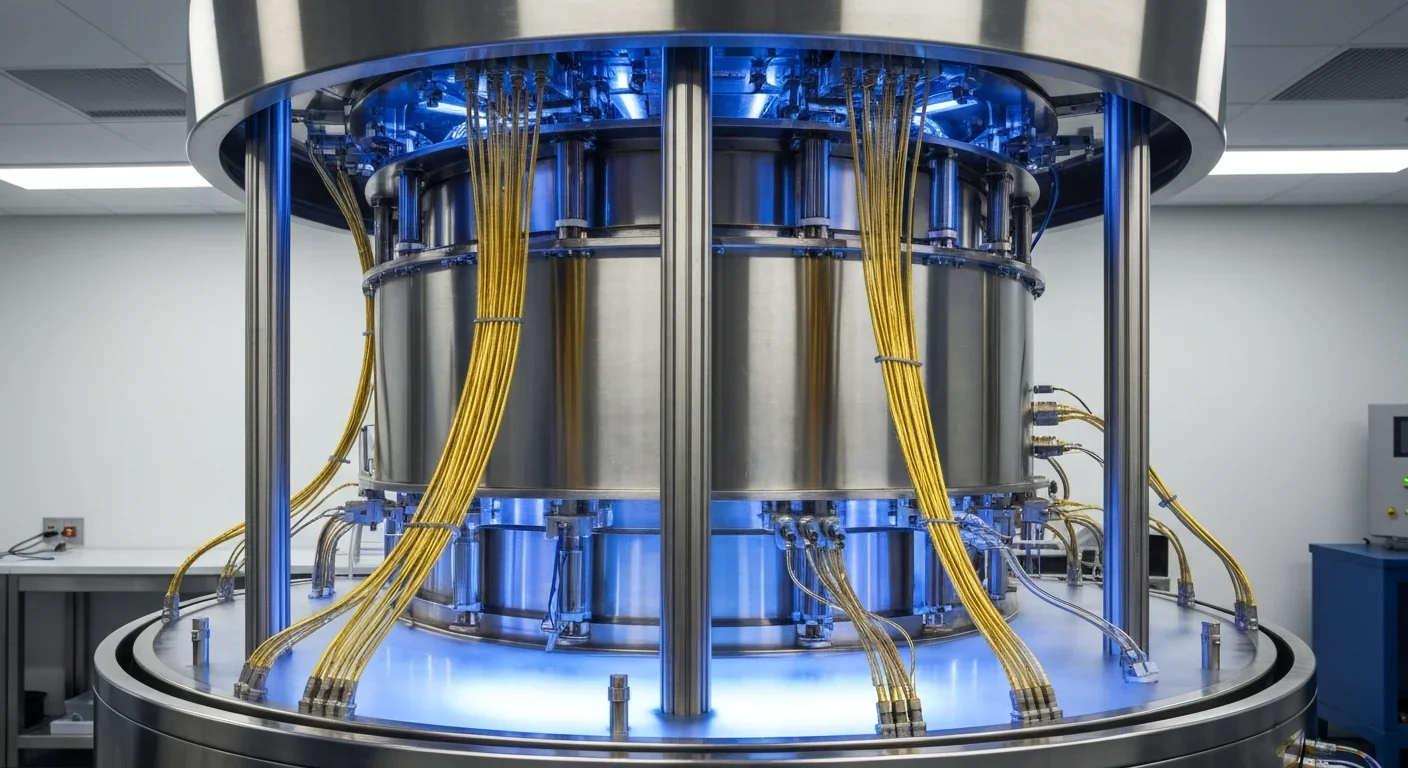

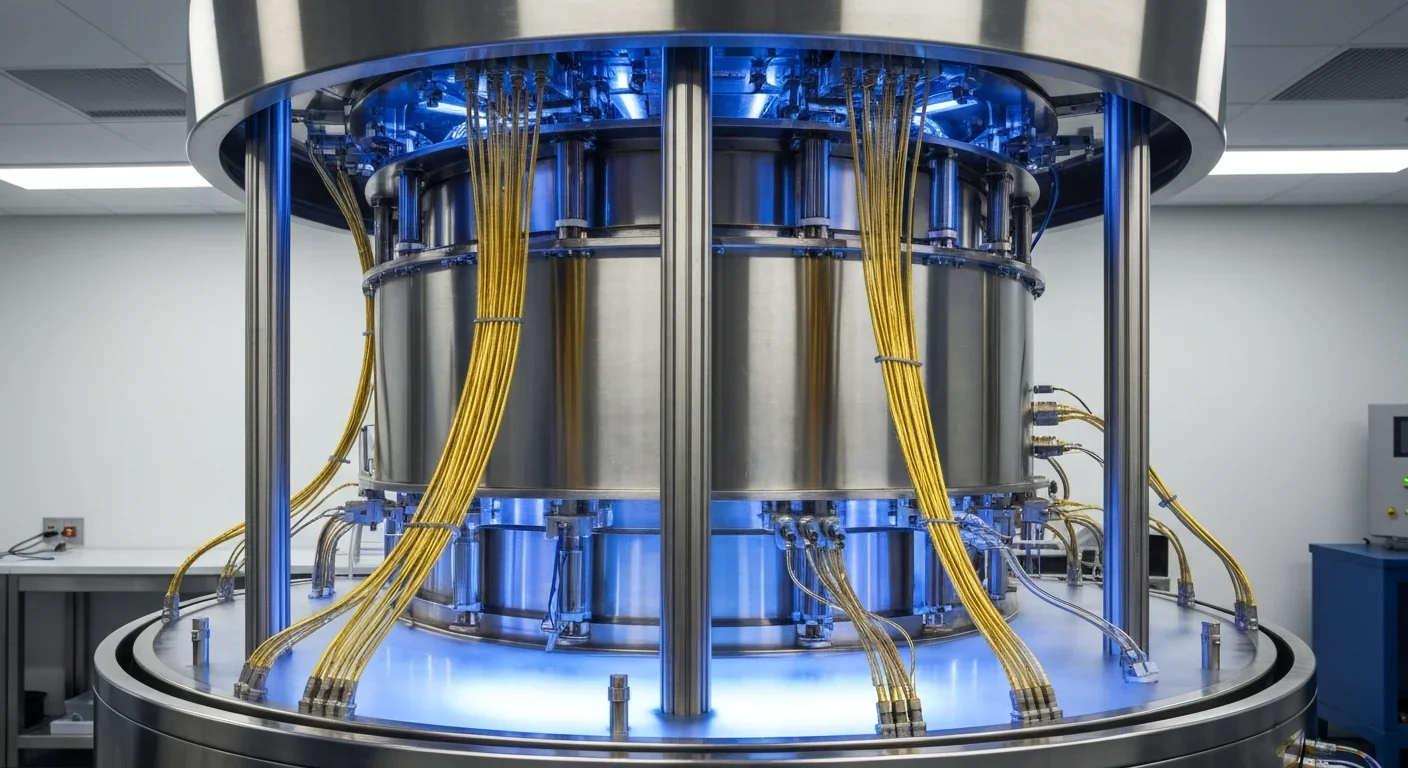

The next technological revolution won't be what you expect. It won't arrive as a sleek product launch or a viral app update. It will emerge from a refrigerator the size of a room, cooled to near absolute zero, where scientists are waging a quiet war against the most fundamental problem in quantum computing: their machines keep making mistakes. And fixing those mistakes requires a solution so resource-hungry it threatens to swallow the revolution whole.

Here's the core problem. A classical computer bit is robust. It's either a 0 or a 1, and it stays that way until you tell it otherwise. A quantum bit, or qubit, is a completely different animal. It can exist in a superposition, a weighted combination of 0 and 1 at once, and that property underlies quantum computers' extraordinary power. But that same property makes qubits absurdly fragile.

Qubits fail in three main ways. They decohere, meaning their quantum state leaks away into the environment like heat escaping through a cracked window. They suffer gate errors, where the operations meant to manipulate them introduce tiny inaccuracies that compound over time. And they're destroyed by measurement, because in quantum mechanics, the act of observing a system changes it.

State-of-the-art qubits have error rates around 1 in 1,000 per operation. That might sound acceptable until you realize that useful quantum algorithms require billions of sequential gate operations. At a 0.1% error rate per gate, the probability of getting through a billion gates without a single error is effectively zero.
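The arithmetic behind that claim can be checked in a few lines. This is a minimal sketch that assumes every gate fails independently with the same probability, which real hardware only approximates; the function name is illustrative.

```python
import math

def error_free_probability(p_gate: float, n_gates: int) -> float:
    """Probability that n_gates independent gates all succeed: (1 - p)^n."""
    # log1p keeps precision for small p; exp underflows to 0.0 for very large n.
    return math.exp(n_gates * math.log1p(-p_gate))

# 0.1% error per gate, for circuits of increasing length
for n in (1_000, 10_000, 1_000_000_000):
    print(f"{n:>13,} gates: {error_free_probability(1e-3, n):.3e}")
```

Even a thousand gates leave only about a 37% chance of a clean run under this model, and at a billion gates the result underflows to zero in double precision.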

This is where quantum error correction enters the picture. The idea is straightforward in principle but staggering in practice: spread the information of one "logical" qubit across many imperfect "physical" qubits, so that errors can be detected and corrected before they corrupt the computation.

The key insight of quantum error correction is that you can measure whether an error occurred without measuring the state itself, preserving the superposition that gives quantum computers their power.
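A toy illustration of that insight is the three-qubit bit-flip code. The sketch below is not the surface code and only handles a single bit-flip error (real codes must also catch phase flips). It stores a state as a dictionary from basis strings to amplitudes, and all names are illustrative.

```python
def encode(alpha, beta):
    """Encode alpha|0> + beta|1> as alpha|000> + beta|111>."""
    return {"000": alpha, "111": beta}

def apply_x(state, qubit):
    """Flip one qubit in every basis component of the state."""
    flipped = {}
    for bits, amp in state.items():
        b = list(bits)
        b[qubit] = "1" if b[qubit] == "0" else "0"
        flipped["".join(b)] = amp
    return flipped

def syndrome(state):
    """Parities of qubits (0,1) and (1,2).

    Every basis component gives the same answer, so the parity checks
    reveal where the error is without revealing alpha or beta.
    """
    results = {(int(b[0]) ^ int(b[1]), int(b[1]) ^ int(b[2])) for b in state}
    assert len(results) == 1, "syndrome must be the same for all components"
    return results.pop()

# Which qubit to flip back for each syndrome (None means no error seen)
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}

def correct(state):
    qubit = CORRECTION[syndrome(state)]
    return state if qubit is None else apply_x(state, qubit)

original = encode(0.6, 0.8)
for q in range(3):
    noisy = apply_x(original, q)
    print(q, syndrome(noisy), correct(noisy) == original)
```

The syndrome depends only on which qubit was flipped, never on the amplitudes 0.6 and 0.8, which is why the superposition survives the check.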

The challenge of protecting information from noise is ancient. When the telegraph was invented in the 1830s, engineers quickly discovered that electrical signals degraded over long distances. They built relay stations. When Shannon published his information theory in 1948, he proved that reliable communication was possible over noisy channels, as long as you added enough redundancy. Classical error correction became a solved problem. Your phone corrects thousands of bit errors every second without you noticing.

But quantum error correction is fundamentally harder, for a reason that would have puzzled Shannon. In classical computing, you can simply copy a bit to create redundancy. In quantum computing, you can't. The no-cloning theorem forbids copying an unknown quantum state. And you can't just check whether a qubit has the right value, because measurement destroys the superposition you're trying to protect.

So researchers had to invent something genuinely new. In the mid-1990s, three groups independently proved what's now called the threshold theorem: if the error rate per gate stays below a certain threshold, you can suppress logical errors to arbitrarily low levels by adding more physical qubits. The theorem was proven by Dorit Aharonov and Michael Ben-Or, by Emanuel Knill, Raymond Laflamme, and Wojciech Zurek, and by Alexei Kitaev. It was, in a sense, quantum computing's license to exist. The question shifted from "is fault-tolerant quantum computing possible?" to "how expensive will it be?"

The answer, it turns out, is very expensive.

The dominant approach to quantum error correction today is the surface code, a two-dimensional grid of qubits where data qubits alternate with measurement qubits in a checkerboard pattern. The measurement qubits continuously check for errors without revealing the actual quantum information, a trick that preserves the superposition while still catching mistakes.

The surface code is popular for a practical reason: it only requires interactions between neighboring qubits on a flat chip, something that superconducting processors can actually build. Its error threshold sits around 1% per gate operation, which is relatively forgiving compared to other codes. If your physical qubits can stay below that 1% error rate, the surface code can suppress logical errors exponentially as you increase the code's "distance," a measure of how many physical qubits you use.
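To make "exponential suppression" concrete, here is a rough sketch using a commonly quoted heuristic, logical error ≈ A · (p / p_th)^((d+1)/2). The prefactor A = 0.03 and the exact form of the exponent are assumptions for illustration, not figures from this article; only the 1% threshold comes from the text above.

```python
def logical_error_rate(p, d, p_th=1e-2, prefactor=0.03):
    """Heuristic logical error rate per round for an odd distance d.

    p is the physical error rate, p_th the threshold.
    prefactor is an assumed constant, not a measured value.
    """
    return prefactor * (p / p_th) ** ((d + 1) // 2)

def distance_for_target(p, target, p_th=1e-2, prefactor=0.03):
    """Smallest odd distance whose heuristic logical error is <= target."""
    if p >= p_th:
        raise ValueError("above threshold: adding qubits does not help")
    d = 3
    while logical_error_rate(p, d, p_th, prefactor) > target:
        d += 2
    return d

# Physical error rate of 0.1%, ten times below the 1% threshold
for d in (3, 5, 7, 9):
    print(d, f"{logical_error_rate(1e-3, d):.1e}")
print("distance for a 1e-12 target:", distance_for_target(1e-3, 1e-12))
```

Under these assumptions each step of two in distance buys another factor of ten, and a very low target such as 1e-12 lands at a distance in the low twenties.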

But here's where the math gets painful. A distance-d surface code requires roughly 2d² − 1 physical qubits for a single logical qubit. To push logical error rates down to the 10⁻¹² level needed for useful algorithms, you need code distances in the range of 20 to 30. A distance-25 code requires about 1,250 physical qubits for one logical qubit. Factor in the overhead for magic state distillation, the process needed to perform certain essential gates that the surface code can't do natively, and the total balloons to roughly 1,000 to 10,000 physical qubits per logical qubit.
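The qubit count is easy to tabulate. This short sketch uses only the formula quoted above and ignores magic state distillation entirely; the function name is illustrative.

```python
def surface_code_qubits(d: int) -> int:
    """Physical qubits (data plus measurement) for one distance-d logical qubit."""
    return 2 * d * d - 1

for d in (20, 25, 30):
    print(f"distance {d}: {surface_code_qubits(d):,} physical qubits")
```

Across the 20-to-30 range the count runs from 799 to 1,799, with distance 25 giving 1,249.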

That's the 1,000-to-1 ratio that haunts the field.

"We need about 1,000 physical qubits per logical qubit for large-scale applications, but we are researching ways to bring that down to a few hundred."

- Michael Newman, Google Quantum AI

The surface code has a dirty secret. On its own, it can only perform a limited set of operations called Clifford gates. These gates are useful, but they're not enough for universal quantum computing. In fact, a circuit built only from Clifford gates can be efficiently simulated on a classical computer, a result known as the Gottesman-Knill theorem. To unlock the full power of a quantum machine, you need at least one non-Clifford gate, and the standard choice is the T gate.

This is where magic state distillation comes in. You prepare special quantum states called "magic states" and inject them into your computation to enable T gates. The catch is that these magic states need to be extremely pure, and the process of purifying them from noisy inputs is itself resource-intensive. One of the original Bravyi-Kitaev protocols consumes five noisy magic states to produce one cleaner one, and multiple rounds of distillation are typically needed.
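The cost of stacking rounds compounds quickly. As a back-of-the-envelope sketch, assume each round turns five inputs into one output and that no round is ever rejected. Real protocols discard failed attempts, so this is a lower bound; the function name is illustrative.

```python
def raw_states_needed(rounds: int, inputs_per_output: int = 5) -> int:
    """Noisy magic states consumed per final output after the given rounds."""
    return inputs_per_output ** rounds

for rounds in (1, 2, 3):
    print(f"{rounds} round(s): {raw_states_needed(rounds)} raw states per output")
```

Three rounds already consume 125 raw states for every distilled one, before counting the qubits needed to hold and process them.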

Magic state distillation factories can consume 10 to 100 times more qubits than the logical code qubits themselves. In many realistic algorithm implementations, the distillation factories dominate the total qubit budget. As Dave Hayes of Quantinuum put it, "magic states are the keystone that give quantum computers their power."

Recent progress has been encouraging. Researchers at the University of Oxford achieved what's been called constant-overhead magic state distillation, proving that the exponent governing how distillation overhead grows can be brought to zero, so the ratio of input to output states stays bounded as target error rates decrease. And in 2025, QuEra experimentally demonstrated magic state distillation on logical qubits using neutral-atom arrays, the first fault-tolerant implementation of a non-Clifford operation.

The most significant experimental milestone came in 2024, when Google's Willow quantum chip demonstrated below-threshold surface code operation for the first time. Their distance-7 code, running on 101 qubits, achieved a logical error rate of 0.143% per cycle, and the logical qubit survived 2.4 times longer than even the best individual physical qubit in the array. Each step up in code distance, from 3 to 5 to 7, halved the error rate, demonstrating the exponential error suppression that the threshold theorem promised.
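A naive extrapolation shows what that halving would imply if it simply continued. The sketch below assumes the error rate keeps dropping by a constant factor of 2 for every increase of two in distance, starting from the 0.143% measured at distance 7. That is an assumption, not a Google result, and nothing guarantees the trend holds at larger distances.

```python
def projected_error(d, eps_ref=1.43e-3, d_ref=7, suppression=2.0):
    """Logical error per cycle at distance d, extrapolated from a reference point.

    suppression is the assumed factor gained per distance step of 2.
    """
    return eps_ref / suppression ** ((d - d_ref) / 2)

for d in (7, 11, 17, 27):
    print(f"distance {d}: {projected_error(d):.2e} per cycle")
```

With a suppression factor of only 2, reaching very low error rates takes large distances, which is one reason improving the physical error rate matters as much as adding qubits.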

Google's team also integrated a real-time decoder into a distance-5 code, achieving an average latency of 63 microseconds while keeping pace with the chip's rapid 1.1-microsecond cycle time across up to a million error-correction cycles. This was a breakthrough in its own right, because previous experiments had relied on slow, offline decoding.

But Willow also revealed a sobering reality. Rare correlated error events, possibly caused by cosmic rays, were observed roughly once every hour, corresponding to an error rate around 10⁻¹⁰ per cycle. These bursts set a floor on logical error rates that deeper codes alone can't fix. They'll require new mitigation strategies, from physical shielding to higher-level error detection.
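The link between "once every hour" and a per-cycle rate is a unit conversion. This sketch assumes the 1.1-microsecond cycle time quoted earlier and exactly one event per hour; the names are illustrative.

```python
CYCLE_TIME_S = 1.1e-6       # error-correction cycle time quoted in the article
SECONDS_PER_HOUR = 3600

def cycles_per_hour(cycle_time_s: float = CYCLE_TIME_S) -> float:
    return SECONDS_PER_HOUR / cycle_time_s

def events_per_cycle(events_per_hour: float = 1.0) -> float:
    return events_per_hour / cycles_per_hour()

print(f"cycles per hour:  {cycles_per_hour():.2e}")
print(f"events per cycle: {events_per_cycle():.1e}")
```

One event per hour works out to a few times 10⁻¹⁰ per cycle, the same order of magnitude as the floor described above.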

Google's Michael Newman noted that while the community expects about 1,000 physical qubits per logical qubit for large-scale applications, research is actively pursuing ways to bring that number down to a few hundred. Google's roadmap targets a million-qubit system by the end of the decade.

The 1,000-to-1 ratio isn't a law of physics. It's a consequence of working close to the error threshold with surface codes. Multiple alternative approaches are converging to slash it.

LDPC codes are generating the most excitement. Quantum low-density parity-check codes, particularly the bivariate bicycle (BB) family, can encode multiple logical qubits in a single code block, using 5 to 10 times fewer physical qubits than surface codes. IBM has described a BB code that encodes 12 logical qubits in 144 data qubits plus 144 ancilla qubits. IQM's Tile Codes promise a more than tenfold reduction in physical qubits using near-local connectivity. Photonic Inc. claims its SHYPS QLDPC codes could need up to 20 times fewer physical qubits per logical qubit.
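For the IBM example, the per-logical-qubit cost follows directly from the numbers quoted. This is a small sketch with an illustrative function name, and it does not count any qubits needed for magic state distillation.

```python
def physical_per_logical(data: int, ancilla: int, logical: int) -> float:
    """Physical qubits (data plus ancilla) spent per logical qubit in one block."""
    return (data + ancilla) / logical

ratio = physical_per_logical(data=144, ancilla=144, logical=12)
print(f"{ratio:.0f} physical qubits per logical qubit")
```

That comes to 24 physical qubits per logical qubit for the code block itself.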

The tradeoff is connectivity. Surface codes work with nearest-neighbor interactions on a flat chip. LDPC codes demand more complex wiring, which is harder to build but potentially transformative.

Dynamic surface codes from Google's research team show that you can match standard surface code performance using only three couplers per qubit instead of four, while their "walking circuit" reduces correlated errors from leakage by over an order of magnitude. This co-design of code and hardware could lower overhead without abandoning the surface code entirely.

Neutral-atom platforms may be the most disruptive wildcard. A Caltech-Oratomic team recently showed that dynamic shuttling of atoms with optical tweezers enables high-rate codes where each logical qubit could be encoded with as few as five physical qubits. Their results suggest a useful quantum computer could be built with just 10,000 to 20,000 qubits, not millions. The team has already built the largest qubit array ever assembled, containing 6,100 trapped neutral atoms.

Microsoft's 4D geometric codes take yet another approach, using four-dimensional rotations to achieve a fivefold reduction in physical qubits per logical qubit, with a "single-shot" property that allows errors to be corrected quickly without increasing circuit depth.

"Our results now make useful quantum computation with neutral atoms appear within reach by reducing qubit counts by up to two orders of magnitude."

- Madelyn Cain, Caltech-Oratomic Research Team

The quantum error correction race is genuinely global, and different regions are placing different bets. While Silicon Valley giants like Google focus on scaling superconducting chips, researchers in Europe are pursuing alternative architectures. IQM in Finland is developing Tile Codes with crystal-star topologies. In the UK, Riverlane has built FPGA-based decoders that process a full decoding round in under 1 microsecond, addressing the critical bottleneck of decoding speed.

The resource estimates for practical quantum algorithms are dropping fast. In 2019, factoring a 2048-bit RSA key was estimated to require 20 million physical qubits. By 2025, Craig Gidney at Google showed it could be done with fewer than one million noisy physical qubits. Then Iceberg Quantum's Pinnacle architecture, built on QLDPC codes, claimed it could be done with fewer than 100,000 physical qubits. That's a 200-fold reduction in seven years, driven almost entirely by algorithmic and code innovations rather than hardware improvements.
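The reduction factors can be tabulated from the figures above. The later two numbers are stated as "fewer than," so this sketch treats them as upper bounds and the resulting factors as lower bounds; the labels are illustrative.

```python
# (label, physical-qubit estimate for factoring a 2048-bit RSA key)
ESTIMATES = [
    ("2019 estimate", 20_000_000),
    ("Gidney (upper bound)", 1_000_000),
    ("Pinnacle (upper bound)", 100_000),
]

def reduction_factors(estimates):
    """Reduction of each estimate relative to the first entry."""
    baseline = estimates[0][1]
    return {label: baseline / qubits for label, qubits in estimates}

for label, factor in reduction_factors(ESTIMATES).items():
    print(f"{label}: {factor:.0f}x fewer qubits than the 2019 estimate")
```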

IBM's roadmap provides perhaps the most concrete near-term timeline. Their 2029 Starling system aims for approximately 200 logical qubits encoded via quantum error correction, using around 10,000 physical qubits. That's a ratio closer to 50-to-1, achieved through LDPC codes rather than surface codes. Their 2025 Kookaburra system will link three 1,386-qubit chips into a 4,158-qubit processor using chip-to-chip couplers.

Within the next decade, you'll likely see the first quantum computers that can run algorithms genuinely beyond the reach of any classical machine. The path there depends on solving the error correction overhead problem, and the field is attacking it from every angle simultaneously.

The honest picture is this: the 1,000-to-1 ratio is real for today's surface codes operating near threshold. But it's a moving target. As physical error rates improve from 0.1% toward 0.01%, the ratio could drop to 50-to-1 even with surface codes. New code families could push it lower still. And platform innovations like neutral-atom shuttling could fundamentally reshape what's possible.

The question isn't whether fault-tolerant quantum computing will arrive. The threshold theorem guarantees it's possible. The question is whether it arrives in 2030 or 2040, and whether it requires a machine the size of a data center or one the size of a server rack. Every qubit of overhead we eliminate brings that future closer. And right now, the engineers working on this problem are making faster progress than almost anyone predicted.
