
TL;DR: P4, a domain-specific programming language, lets network engineers reprogram switch hardware to parse any protocol at terabit speeds. With hardware from Intel, AMD, and NVIDIA now supporting P4, programmable data planes are transforming everything from cloud networking to AI infrastructure.

The next technological revolution won't be what you expect. It won't arrive as a flashy consumer gadget or a viral app. It's happening inside the routers and switches that silently ferry trillions of data packets across the internet every second. A programming language called P4 is giving network engineers something they've never had before: the ability to rewrite how those packets are processed, on the fly, at speeds exceeding 12 terabits per second. And that shift is about to reshape everything from cloud computing to artificial intelligence.

For decades, the silicon chips inside network switches operated like factory assembly lines built for a single product. Engineers at companies like Broadcom designed application-specific integrated circuits, or ASICs, that could forward packets at blistering speeds but only understood a fixed set of protocols. If a new protocol emerged, or if a hyperscaler like Google needed custom packet handling, the only option was to wait years for a new chip generation. Broadcom's Tomahawk 4, for example, pushes 25.6 terabits per second of aggregate throughput, but it processes packets using logic that was literally etched into silicon during manufacturing.

This rigidity created a strange paradox. The software world was moving at lightning speed, with developers shipping code updates multiple times a day. But the network hardware underneath was frozen in time, locked into decisions made years before deployment. The emergence of software-defined networking, or SDN, in the late 2000s tried to fix this by separating the control plane from the data plane. OpenFlow, the first major SDN protocol, gave operators remote control over forwarding rules. But it still couldn't change how a switch parsed packets at the hardware level. OpenFlow could tell a switch where to send a packet, but it couldn't teach the switch to understand an entirely new packet format.

In 2014, a group of researchers from Stanford, Princeton, and several industry labs, including Nick McKeown and Jennifer Rexford, published a paper that proposed something radical. What if network switches could be reprogrammed the same way you'd update software on a laptop? Their answer was P4, short for Programming Protocol-Independent Packet Processors. The language was designed from the ground up with three core goals: target independence, protocol independence, and reconfigurability.

P4 is a domain-specific language that lets developers describe exactly how a switch should parse, match, and forward packets, without assuming anything about what protocols those packets use. Unlike a fixed-function switch pipeline, P4 has no built-in knowledge of common protocols like IP, TCP, or Ethernet. Instead, programmers define the header formats and field names themselves. The switch then slices up incoming packets according to these custom-defined schemas, processes them through a pipeline of match-action tables, and sends them on their way.
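The parse-then-match flow described above can be imitated in a few lines of Python. This is only a rough sketch of the idea, not P4 code and not how any real target works: the `MY_TUNNEL` header, the `Table` class, and the `process` function are all invented for illustration, and the sketch assumes byte-aligned headers.

```python
from dataclasses import dataclass, field

# Hypothetical header schemas: (field name, width in bits), in wire order.
# Nothing is built in; changing a schema changes what the "switch" understands.
ETHERNET = [("dst", 48), ("src", 48), ("ether_type", 16)]
MY_TUNNEL = [("tunnel_id", 16), ("hop_count", 8)]  # invented protocol

EXPERIMENTAL_ETHERTYPE = 0x88B5  # IEEE 802 local experimental EtherType


def parse(schema, data, offset=0):
    """Slice bytes into named integer fields; return (fields, next_offset)."""
    total_bits = sum(width for _, width in schema)
    if total_bits % 8:
        raise ValueError("this sketch only handles byte-aligned headers")
    end = offset + total_bits // 8
    if end > len(data):
        raise ValueError("packet too short for header")
    value = int.from_bytes(data[offset:end], "big")
    fields, remaining = {}, total_bits
    for name, width in schema:
        remaining -= width
        fields[name] = (value >> remaining) & ((1 << width) - 1)
    return fields, end


@dataclass
class Table:
    """Exact-match table: one header field is the key, the value is an action."""
    key: str
    entries: dict = field(default_factory=dict)
    default: tuple = ("drop", None)

    def apply(self, headers):
        return self.entries.get(headers[self.key], self.default)


def process(packet, tunnel_table):
    """Parse Ethernet, then the custom header, then look up an action."""
    eth, offset = parse(ETHERNET, packet)
    if eth["ether_type"] != EXPERIMENTAL_ETHERTYPE:
        return ("drop", None)
    tunnel, _ = parse(MY_TUNNEL, packet, offset)
    return tunnel_table.apply(tunnel)


# Usage: tunnel 7 is forwarded to port 2; anything else is dropped.
table = Table(key="tunnel_id", entries={7: ("forward", 2)})
packet = (bytes(12) + EXPERIMENTAL_ETHERTYPE.to_bytes(2, "big")
          + (7).to_bytes(2, "big") + bytes([3]))
result = process(packet, table)
```

The point of the sketch is that the schema lists are data, not code. Teaching the pipeline a new protocol means adding a list and a table, which is roughly the kind of change a P4 program expresses for real hardware.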

P4 doesn't just program switches differently. It inverts the entire relationship between hardware and software. Instead of software adapting to what the hardware supports, the hardware adapts to whatever the software defines.

Think of it like the difference between a calculator that can only add and subtract and a programmable computer that can run any application you write for it. The calculator is faster at its specific task, but the computer can adapt to anything.

The practical breakthrough came when Stanford spin-out Barefoot Networks built the first commercial P4-programmable ASIC, called Tofino. The chip could handle up to 6.5 terabits per second of throughput using a Protocol Independent Switch Architecture, or PISA. Its successor, Tofino 2, roughly doubled that to 12.8 Tbps on a 7-nanometer process, supporting up to 128 ports of 100-gigabit Ethernet. These weren't laboratory curiosities. Facebook deployed Tofino-based switches to run SilkRoad, a Layer 4 load balancer capable of tracking state for 10 million connections at terabit throughput.
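The headline numbers are easy to sanity-check. The sketch below multiplies ports by port speed and then estimates the theoretical worst-case packet arrival rate if every port carried minimum-size Ethernet frames. The packet-rate figure is an upper bound derived from standard Ethernet framing (64-byte minimum frame plus 20 bytes of preamble and inter-frame gap), not a published specification of any chip, and the function names are made up for this example.

```python
GBPS = 10**9

# Per-frame overhead on the wire: 8-byte preamble/SFD plus 12-byte inter-frame gap.
ETHERNET_OVERHEAD_BYTES = 20
MIN_FRAME_BYTES = 64


def aggregate_bps(ports, port_speed_bps):
    """Aggregate throughput when every port runs at full speed."""
    return ports * port_speed_bps


def max_packets_per_second(throughput_bps, frame_bytes=MIN_FRAME_BYTES):
    """Upper bound on packet arrivals for a given frame size (whole packets)."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return throughput_bps // bits_on_wire


# 128 ports of 100-gigabit Ethernet, as quoted for Tofino 2.
total_bps = aggregate_bps(128, 100 * GBPS)          # 12.8 Tbps
worst_case_pps = max_packets_per_second(total_bps)  # roughly 19 billion per second
```

Even as a rough bound, this shows why the processing has to live in a hardware pipeline: at these rates there is only a tiny fraction of a nanosecond of aggregate time per packet.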

Intel acquired Barefoot Networks in June 2019 for an undisclosed sum, recognizing the strategic value of programmable data planes. But in a move that sent shockwaves through the industry, Intel announced in January 2023 that it would end future investment in the Tofino line while continuing to support existing products. The decision raised urgent questions about the future of P4 hardware. Would the ecosystem collapse around a single vendor's decision?

The answer, it turned out, was no. The P4 language was designed to be target-independent, meaning programs written in P4 can be compiled for entirely different hardware targets. AMD's Pensando Elba DPU is fully P4 programmable and optimized for dual 200-gigabit line-rate processing. NVIDIA's BlueField-3 DPU delivers 400 gigabits per second of throughput while offering the computing equivalent of up to 300 CPU cores. And the upcoming BlueField-4 platform promises 800 Gbps with six times the compute power of its predecessor.

"Barefoot is a founding member of the Stratum project and has made significant contributions enabling high-throughput, fully-programmable networks with P4, P4Runtime and our Tofino and Tofino 2 family of high-performance Ethernet switch ASICs."

- Ed Doe, Vice President, Intel's Connectivity Group

Even Intel itself continued investing in P4 through its Infrastructure Processing Unit line, with an 800 Gbps IPU scheduled for 2025-2026. And vendors like Asterfusion continue to sell 2-6.4 Tbps Tofino-based switches, combining the ASIC with Marvell OcteonTX DPU cards to create hybrid architectures capable of line-rate forwarding and deep stateful inspection.

P4 isn't the only game in town for programmable networking. eBPF/XDP runs inside the Linux kernel, giving developers the ability to attach custom packet processing programs to network interfaces without kernel modifications. DPDK, the Data Plane Development Kit, bypasses the kernel entirely, giving user-space applications direct access to network hardware for high-throughput processing. And OpenFlow, the original SDN darling, still sees use in campus and enterprise environments.

So when should you use which? As network engineer Tom Herbert put it after an exhaustive comparison, P4 wins on raw performance because execution in dedicated hardware is always faster than code running on a host CPU. But DPDK wins on debuggability, since engineers can use familiar tools like GDB. And eBPF wins on ecosystem breadth, with deep integration into the Linux kernel and growing cloud-native adoption.

The technologies aren't mutually exclusive. The P4 compiler can actually generate eBPF bytecode, allowing a single P4 program to run on both a hardware ASIC and a Linux kernel. And vendors like iWave are building SmartNICs that fuse P4 pipelines with DPDK for hybrid architectures that eliminate the compromise between performance and programmability.

As for OpenFlow, research suggests P4 may eventually render it obsolete, since OpenFlow agents can be written on top of P4 but not the reverse.

The most exciting applications of P4 go far beyond basic packet forwarding. The P4 community developed In-band Network Telemetry, or INT, a specification that embeds telemetry metadata directly into packet headers as they traverse the network. Each P4-enabled switch along the path can append performance data like queue depth, latency, and congestion metrics. Research from the MM-INT project showed this approach can reduce telemetry probe overhead by 4x and data transfer by nearly 3x compared to traditional methods.
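To make the idea concrete, here is a minimal Python sketch of in-band telemetry. It is a simplification under stated assumptions: the field names (`switch_id`, `queue_depth`, `hop_latency_ns`), the `max_hops` limit, and the `transit`, `sink`, and `bottleneck` helpers are illustrative and do not follow the wire format of the INT specification.

```python
from dataclasses import dataclass, field


@dataclass
class HopMetadata:
    switch_id: int
    queue_depth: int       # packets queued at egress when this packet left
    hop_latency_ns: int    # time the packet spent inside this switch


@dataclass
class Packet:
    payload: bytes
    int_stack: list = field(default_factory=list)  # telemetry rides with the packet


def transit(packet, switch_id, queue_depth, hop_latency_ns, max_hops=8):
    """A switch on the path appends its own metadata, up to a hop budget."""
    if len(packet.int_stack) < max_hops:
        packet.int_stack.append(HopMetadata(switch_id, queue_depth, hop_latency_ns))
    return packet


def sink(packet):
    """The last hop strips the telemetry and hands it to a collector."""
    report, packet.int_stack = packet.int_stack, []
    return packet, report


def bottleneck(report):
    """Return the switch that reported the deepest queue."""
    return max(report, key=lambda hop: hop.queue_depth).switch_id


# Usage: one packet crosses three switches, then the sink extracts the report.
pkt = Packet(payload=b"data")
for sw, depth, latency in [(1, 4, 900), (2, 57, 4200), (3, 9, 1100)]:
    transit(pkt, sw, depth, latency)
pkt, report = sink(pkt)
congested = bottleneck(report)
path_latency_ns = sum(hop.hop_latency_ns for hop in report)
```

Because the measurements travel with production traffic, the collector sees the exact path and queue state that real packets experienced, with no separate probe packets needed in this simplified picture.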

P4 switches aren't just forwarding packets anymore. They're running neural networks, performing encryption, and collecting real-time telemetry, all at wire speed, without moving a single byte to a CPU.

Researchers have also demonstrated that P4 switches can run quantized neural networks directly in the data plane. One project deployed a split early-exit CNN inside a P4 switch for DDoS detection, achieving a 99.91% F1-score while reducing control-plane overhead by over 80%. Security applications are equally compelling: P4 switches now perform DDoS mitigation, firewall enforcement, and even AES encryption at line rate using clever techniques like lookup-table precomputation and the recirculate-and-truncate method.
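Lookup-table precomputation is worth a small illustration, because the same trick underlies both quantized inference and table-driven encryption. The sketch below is a generic example, not the method of any project cited here: the control plane evaluates an 8-bit quantized sigmoid once for every possible input, and the data plane then needs only an exact-match lookup. The scale factor of 1/16 and both function names are assumptions chosen for illustration.

```python
import math

INPUT_SCALE = 1 / 16   # assumed quantization scale: int8 value x represents x / 16


def build_sigmoid_table():
    """Control-plane step: precompute sigmoid for all 256 possible byte patterns.

    Keys are raw unsigned bytes (what a match table would see); each is
    reinterpreted as a signed int8 before the floating-point math runs.
    Outputs are quantized to the range 0..255.
    """
    table = {}
    for key in range(256):
        x = key - 256 if key >= 128 else key           # two's-complement decode
        y = 1.0 / (1.0 + math.exp(-x * INPUT_SCALE))   # floating point, done offline
        table[key] = int(y * 255 + 0.5)                # round to nearest integer
    return table


def data_plane_sigmoid(table, key):
    """Data-plane step: a single exact-match lookup, no arithmetic at all."""
    return table[key]


SIGMOID_TABLE = build_sigmoid_table()
midpoint = data_plane_sigmoid(SIGMOID_TABLE, 0)       # input 0.0
saturated_high = data_plane_sigmoid(SIGMOID_TABLE, 127)  # largest positive input
saturated_low = data_plane_sigmoid(SIGMOID_TABLE, 128)   # most negative input
```

The trade-off is memory for computation: 256 entries cover an 8-bit input, but the table grows exponentially with input width, which is one reason these designs lean on aggressive quantization.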

In the realm of time-sensitive networking, a DETERMINISTIC6G project demonstrated a fully software-based TSN switch using P4 and Linux kernel scheduling, dynamically re-prioritizing packets without any control-plane interaction.

The explosion of AI training and inference workloads is creating unprecedented demand for network bandwidth. GPU clusters running models with hundreds of billions of parameters need to shuffle massive amounts of data between nodes with minimal latency. This is where programmable DPUs become critical.

NVIDIA's BlueField-3 supports GPU-direct RDMA, enabling one system's GPU to directly access another system's GPU memory across the network. It delivers full line-rate IPsec and TLS encryption at 400 Gbps with minimal CPU overhead. The BlueField-4 DPU goes further, collapsing networking, PCIe switching, and hardware acceleration into a single chip. NVIDIA's Inference Context Memory Storage Platform uses four BlueField-4 DPUs, each connected to roughly 150 TB of NVMe storage, enabling AI models to access context memory across the network without interrupting GPU computation.

DPUs are emerging as the third pillar of data center computing alongside CPUs and GPUs. They enforce zero-trust security policies at the network edge, handle RDMA congestion control for AI training clusters, and provide the programmable east-west traffic management that AI workloads demand.

"The convergence of AI, ML, and intent-based networking is expected to unlock new use cases and revenue streams, particularly in areas such as predictive analytics, autonomous networks, and advanced cybersecurity."

- P4 Programmable Switch Market Research Report, 2033

P4 is not without its challenges. The learning curve is steep. Network teams accustomed to configuring switches through CLI commands must now think like programmers, learning a new domain-specific language with limited debugging tools. While DPDK engineers can reach for GDB, P4 developers face a less mature debugging ecosystem.

Hardware constraints also remain real. Programmable ASICs like Tofino trade buffer memory for programmability, which limits their use in edge deployments where deep packet buffers are essential. Complex operations like floating-point math and encryption aren't natively supported, requiring creative workarounds that add latency.

The P4 Programmable Switch market is growing rapidly. North America accounted for 39% of market revenue in 2024, while the Asia Pacific region is experiencing the fastest growth at a projected CAGR of 32.1% through 2033. The ratification of the 800G Ethernet standard in 2024 is driving demand for next-generation programmable silicon, and modern ASICs can already process packets at rates exceeding 25.6 Tbps.

Open-source projects like Stratum, released in 2019 by the Open Networking Foundation, are creating lightweight network operating systems built around P4 and P4Runtime. The p4c compiler offers multiple backends, from BMv2 software simulation to eBPF kernel integration, lowering the barrier for teams that want to experiment before investing in hardware. White-box switches running P4 are significantly lowering total cost of ownership compared to proprietary alternatives.

Within the next decade, the distinction between "the network" and "the computer" will blur beyond recognition. P4-programmable switches are already performing machine learning inference, encrypting traffic, and providing real-time telemetry, all at wire speed. As the industry moves from 400G to 800G and eventually to 1.6 terabit Ethernet, the switches that carry that traffic will need to be as adaptable as the applications they serve.

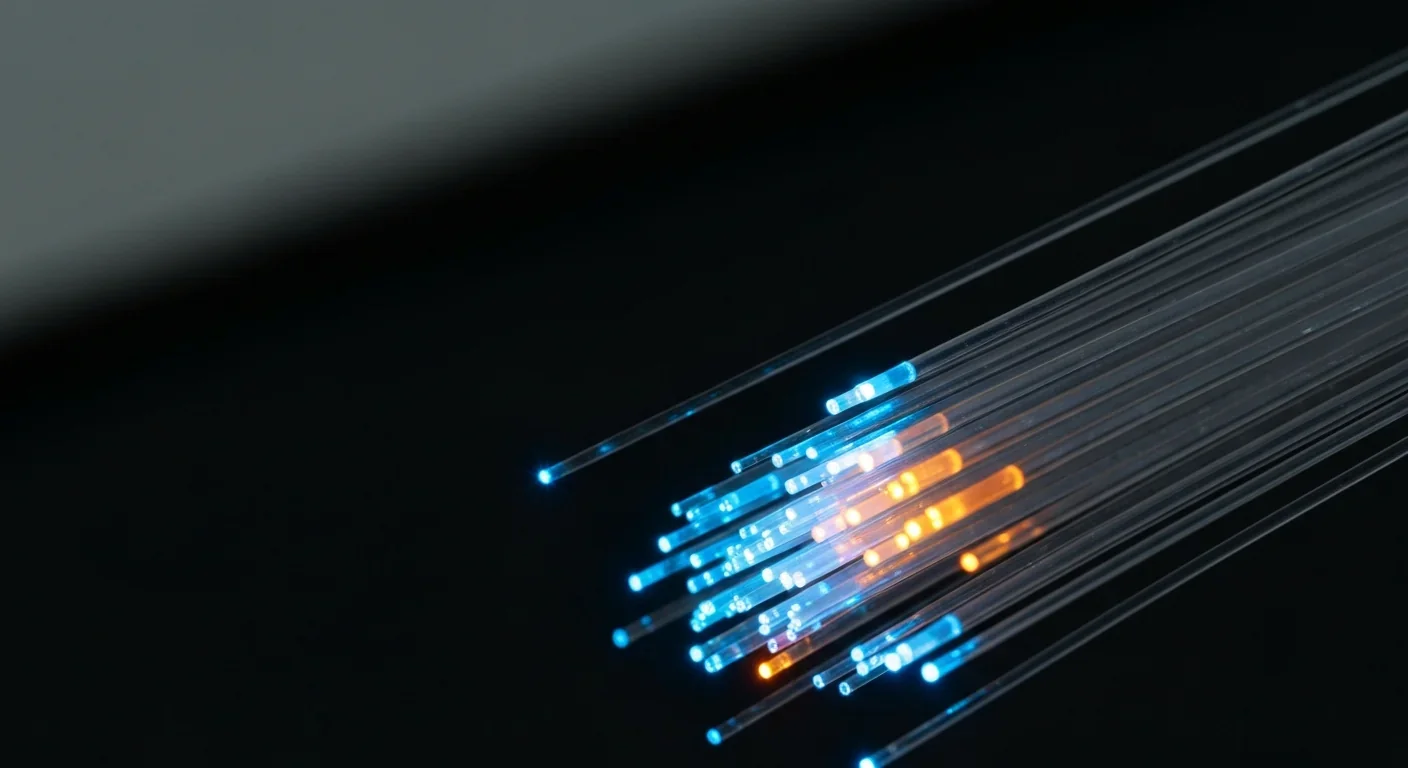

For network engineers and infrastructure architects, the message is clear: the era of configuring networks is giving way to the era of programming them. The teams that invest now in understanding P4, its toolchain, and its hardware ecosystem will be the ones building the networks that power the next generation of AI, cloud computing, and everything that depends on moving data at the speed of light.

The packets flowing through your network right now are processed by logic etched into silicon years ago. P4 is the technology that makes that logic programmable. And once you can program a network switch like you program a computer, the only limit is imagination.
