Why Quantum Computers Need 1,000 Qubits for One

TL;DR: TPM 2.0 and Microsoft's Pluton processor establish hardware roots of trust that anchor zero-trust security by cryptographically measuring every boot component as it loads, so a device's boot state can be verified before the device is trusted. Pluton advances this by integrating the security processor directly into the CPU die, eliminating the exposed communication bus that attackers can tap to steal encryption keys.

Your security stack has a dirty secret. No matter how many firewalls you deploy, how sophisticated your endpoint detection gets, or how aggressively you patch your operating system, there's a layer underneath all of it that most organizations still leave dangerously exposed. It's the firmware. And attackers have figured this out.

In 2023, a UEFI bootkit called BlackLotus became the first publicly known malware to bypass Secure Boot on fully patched Windows 11 systems. It could disable BitLocker, Hypervisor-Protected Code Integrity (HVCI), and Windows Defender before the operating system even started loading. Think about that for a moment. Every security tool your organization relies on, neutralized before it gets a chance to run.

This is why the conversation about zero-trust security has shifted dramatically from software to silicon. The real question isn't whether your firewall rules are tight enough. It's whether your hardware can be trusted at all.

Here's the fundamental issue: software can lie. A compromised operating system can report that everything is fine while malware operates underneath it, invisible to every detection tool running above the firmware layer. This isn't theoretical. The LoJax rootkit, discovered in 2018 and attributed to APT28 (Fancy Bear), modified UEFI firmware to persist through operating system reinstalls and even hard drive replacements. It was the first UEFI rootkit found in the wild, and it proved that nation-state attackers were already operating below the OS.

The Bootkitty proof-of-concept extended this threat to Linux, hooking into UEFI and GRUB authentication protocols to force successful signature checks regardless of what code was actually running. These aren't edge cases. They represent a growing category of firmware-level attacks that traditional antivirus and EDR solutions simply cannot see.

Firmware-level malware like BlackLotus and LoJax operates below the operating system, making it invisible to every traditional security tool. These attacks persist through OS reinstalls, hard drive replacements, and full system resets.

The core problem is that software-only security has no way to verify its own foundation. If the firmware is compromised, every measurement, every check, every verification the OS performs is potentially tainted. You need something that sits below all of that, something immutable that can't be altered by malicious code. You need a hardware root of trust.

A hardware root of trust is a dedicated, tamper-resistant security component that provides cryptographic operations inside physically protected silicon rather than in general-purpose memory where they could be exposed. The concept is straightforward: if you can't trust the software to tell you the truth about itself, you build a hardware witness that records what actually happened during boot.

The Trusted Platform Module (TPM), standardized as ISO/IEC 11889, is the most widely deployed implementation of this idea. TPM 2.0 is now mandatory for Windows 11, which means virtually every new PC shipped since 2021 includes one. But what does it actually do?

At its core, the TPM provides three critical functions. First, it generates and stores cryptographic keys inside tamper-resistant hardware, so even malware with administrator privileges can't easily extract them. Second, it contains Platform Configuration Registers (PCRs) that record cryptographic measurements of every component that loads during boot. Third, it enables remote attestation, so a server can cryptographically verify what code a device actually ran before granting network access.

The magic of TPM-based security happens during a process called measured boot. Here's how it works step by step.

When you power on a computer, the very first code that runs is the UEFI firmware. This firmware measures itself by computing a cryptographic hash of its own code and extending that hash into PCRs 0 through 7 inside the TPM. The key word here is "extending." A PCR extend operation chains values through a one-way hash function: PCR_new = HASH(PCR_old || new_hash), where || denotes concatenation. You can only add to a PCR, never overwrite it. This means the final value in any PCR reflects the complete, ordered history of everything that was measured into it.
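The extend operation described above can be sketched in a few lines of Python. This is a minimal illustration, assuming a SHA-256 PCR bank and a PCR that starts at all zeros; the component byte strings are hypothetical placeholders, not real firmware images, and `pcr_extend` is an illustrative helper rather than a TPM API.

```python
import hashlib

PCR_SIZE = hashlib.sha256().digest_size  # 32 bytes for a SHA-256 PCR bank


def pcr_extend(pcr_old: bytes, measurement: bytes) -> bytes:
    """Return SHA-256(pcr_old || measurement), the new PCR value."""
    return hashlib.sha256(pcr_old + measurement).digest()


# Assumption: the PCR starts at all zeros after a platform reset.
pcr = bytes(PCR_SIZE)

# Hypothetical component images; real firmware hashes the actual binaries.
firmware_hash = hashlib.sha256(b"uefi-firmware-image").digest()
bootloader_hash = hashlib.sha256(b"bootloader-image").digest()

# Same two measurements, extended in different orders.
in_order = pcr_extend(pcr_extend(pcr, firmware_hash), bootloader_hash)
swapped = pcr_extend(pcr_extend(pcr, bootloader_hash), firmware_hash)

print(in_order.hex())
print(in_order != swapped)  # the order of measurements changes the result
```

Because each new value depends on the previous one, there is no input that "sets" a PCR to a chosen value; the only way to reach a given value is to replay the same measurements in the same order.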

Next, the firmware measures the bootloader before handing off control. Under the TCG PC Client convention, that measurement lands in PCR 4, still within the firmware's range; PCRs 8 through 15 are left to the bootloader and operating system. The bootloader then measures the OS kernel. On Linux, the kernel's Integrity Measurement Architecture (IMA) continues measuring drivers and applications, extending those into PCR 10. Each link in the chain measures the next before allowing it to execute.

The result is an incorruptible ledger inside the TPM that records exactly what code ran on the system. If an attacker modifies any component, even a single byte, the PCR values change, and the system's attestation fails.
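To make the single-byte claim concrete, here is a small sketch that folds a chain of measurements into one PCR value and compares it with a known-good baseline. It assumes SHA-256 and a single PCR for simplicity; the component strings and the `replay` helper are hypothetical, chosen only for illustration.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def replay(measurements: list[bytes]) -> bytes:
    """Fold a list of measurement digests into a final PCR value."""
    pcr = bytes(32)  # assumption: PCR starts at zeros after reset
    for digest in measurements:
        pcr = sha256(pcr + digest)
    return pcr


# Hypothetical boot components standing in for real binaries.
good_chain = [b"firmware v1.2", b"bootloader v2.0", b"kernel 6.1"]
baseline = replay([sha256(component) for component in good_chain])

# The same chain with one byte changed in the last component.
tampered_chain = [b"firmware v1.2", b"bootloader v2.0", b"kernel 6.2"]
observed = replay([sha256(component) for component in tampered_chain])

boot_is_trusted = observed == baseline
print(boot_is_trusted)  # False
```

A verifier that holds the baseline never needs to inspect the components themselves; comparing one final digest is enough to detect that something in the chain differed.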

This is where TPM measurements connect directly to zero-trust architecture. In a zero-trust model, no device is trusted by default. Every time a device requests access to corporate resources, it must prove its health.

Remote attestation works like this: a verification server sends a random nonce to the device. The TPM generates a Quote, a package containing a digest of the current PCR values and the nonce, and signs it with its Attestation Key (AK), known as an Attestation Identity Key (AIK) in TPM 1.2. The verifier checks the signature against the TPM's certificate chain, confirms the nonce matches to prevent replay attacks, and compares the PCR values against a known-good baseline.
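The challenge-response flow above can be sketched with the Python standard library. One loud assumption: a real TPM signs the Quote with an asymmetric key whose private half never leaves the chip, and the verifier checks it against a certificate chain. To stay self-contained, this sketch swaps in an HMAC with a shared secret as a stand-in for that signature. `make_quote` and `verify_quote` are hypothetical names, not TPM commands.

```python
import hashlib
import hmac
import secrets

# ASSUMPTION: an HMAC key stands in for the AK. A real TPM uses an
# asymmetric signature, so the verifier never holds the signing key.
AK_STAND_IN = secrets.token_bytes(32)
DIGEST_LEN = 32


def make_quote(pcr_digest: bytes, nonce: bytes) -> tuple[bytes, bytes]:
    """Device side: bind the PCR digest to the verifier's nonce and sign."""
    payload = pcr_digest + nonce
    signature = hmac.new(AK_STAND_IN, payload, hashlib.sha256).digest()
    return payload, signature


def verify_quote(payload: bytes, signature: bytes,
                 nonce: bytes, baseline: bytes) -> bool:
    """Verifier side: check signature, then freshness, then PCR state."""
    expected = hmac.new(AK_STAND_IN, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False  # not produced by the expected key
    pcr_digest, quoted_nonce = payload[:DIGEST_LEN], payload[DIGEST_LEN:]
    if not hmac.compare_digest(quoted_nonce, nonce):
        return False  # stale quote: possible replay
    return hmac.compare_digest(pcr_digest, baseline)


baseline = hashlib.sha256(b"known-good PCR values").digest()

nonce = secrets.token_bytes(16)
payload, signature = make_quote(baseline, nonce)
fresh_ok = verify_quote(payload, signature, nonce, baseline)

# Replaying the old quote against a new challenge fails the nonce check.
new_nonce = secrets.token_bytes(16)
replay_ok = verify_quote(payload, signature, new_nonce, baseline)

# A device whose boot chain changed produces a different PCR digest.
bad_digest = hashlib.sha256(b"modified boot chain").digest()
bad_payload, bad_signature = make_quote(bad_digest, nonce)
tampered_ok = verify_quote(bad_payload, bad_signature, nonce, baseline)

print(fresh_ok, replay_ok, tampered_ok)
```

The order of checks matters: the verifier authenticates the quote first, so it never interprets PCR values from an unauthenticated payload.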

If anything is off, access is denied. Microsoft's Intune enrollment attestation uses exactly this mechanism to enforce device-based conditional access policies. Azure Attestation takes it further, evaluating compliance posture against organizational policies before allowing connections to cloud services. Edge computing platforms like ZEDEDA enforce TPM attestation so aggressively that a failed attestation automatically locks the device, preventing it from unsealing its encrypted disk.
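The link between boot state and disk unsealing can be illustrated with a toy model. This is a conceptual sketch only: `ToyTpm` is a hypothetical class, not a real TPM interface, and it assumes a single SHA-256 PCR with a policy that requires an exact match with the value present at sealing time.

```python
import hashlib


class ToyTpm:
    """Toy model of PCR-bound sealing; not a real TPM interface."""

    def __init__(self) -> None:
        self.pcr = bytes(32)
        self._sealed: dict[str, tuple[bytes, bytes]] = {}

    def reset(self) -> None:
        """Model a reboot: the PCR returns to zeros, sealed blobs persist."""
        self.pcr = bytes(32)

    def extend(self, component: bytes) -> None:
        digest = hashlib.sha256(component).digest()
        self.pcr = hashlib.sha256(self.pcr + digest).digest()

    def seal(self, name: str, secret: bytes) -> None:
        """Bind the secret to the PCR value at sealing time."""
        self._sealed[name] = (self.pcr, secret)

    def unseal(self, name: str) -> bytes:
        required_pcr, secret = self._sealed[name]
        if self.pcr != required_pcr:
            raise PermissionError("PCR state does not match sealing policy")
        return secret


def boot(tpm: ToyTpm, components: list[bytes]) -> None:
    """Reset the toy TPM and measure each component in order."""
    tpm.reset()
    for component in components:
        tpm.extend(component)


tpm = ToyTpm()
boot(tpm, [b"firmware", b"bootloader", b"kernel"])
tpm.seal("disk-key", b"hypothetical-disk-key")

# Same boot chain on the next boot: the key is released.
boot(tpm, [b"firmware", b"bootloader", b"kernel"])
clean_boot_key = tpm.unseal("disk-key")

# Modified bootloader: PCR differs, so the key stays sealed.
boot(tpm, [b"firmware", b"evil-bootloader", b"kernel"])
try:
    tpm.unseal("disk-key")
    tampered_boot_unsealed = True
except PermissionError:
    tampered_boot_unsealed = False

print(tampered_boot_unsealed)  # False
```

In this model the secret is gated by the measured state rather than by anything the operating system reports about itself, which is why a modified boot chain leaves the disk locked.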

"Secure Boot prevents unsigned code from running; TPM records what actually ran for later verification. Together they form the cryptographic backbone of zero-trust device health."

- Professor Messer, CompTIA Security+ Boot Integrity

The NIST SP 800-207 framework and the Department of Defense's Zero Trust Overlays both make device integrity and security posture a basis for access decisions, and hardware-rooted attestation is how that posture gets proven rather than merely asserted. This isn't optional anymore. It's policy.

So TPM 2.0 sounds pretty solid, right? There's a catch. A serious one.

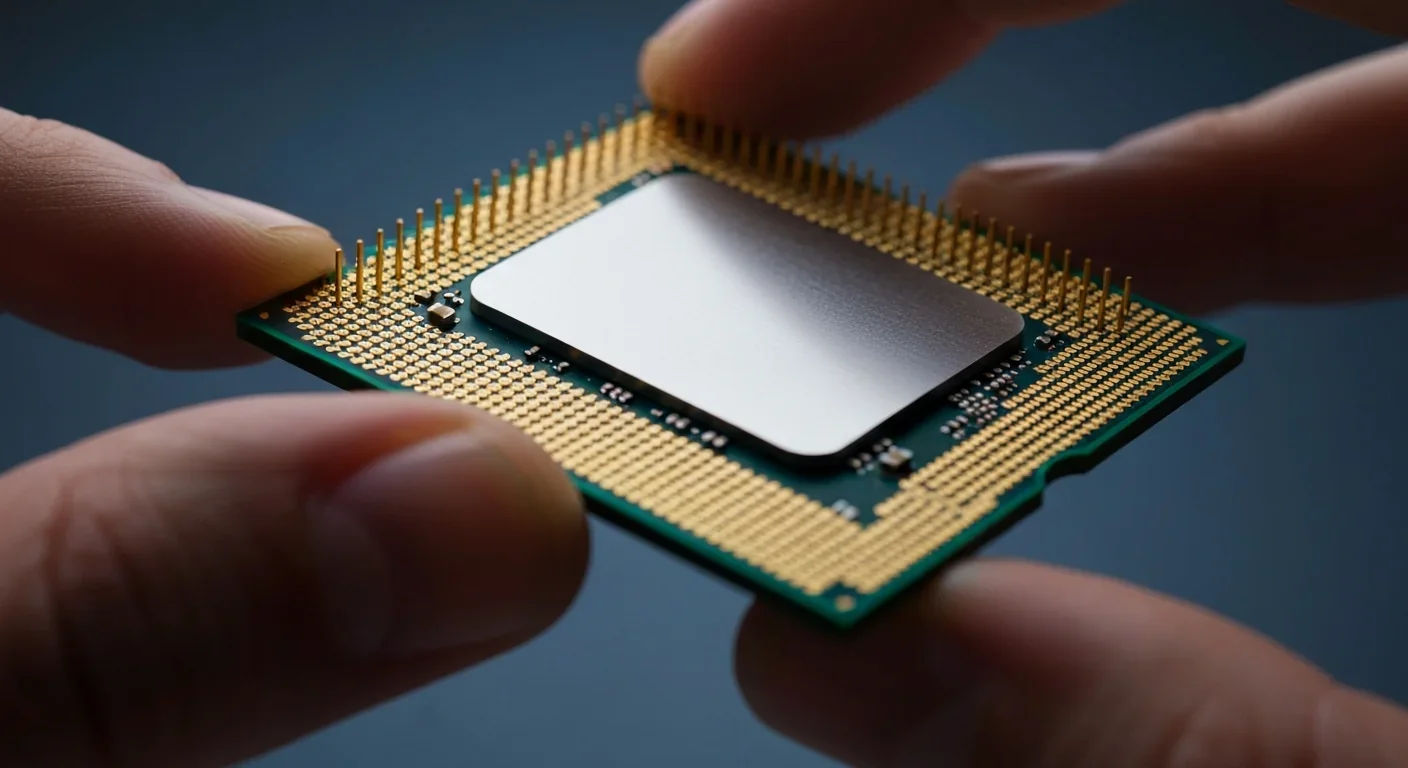

Traditional discrete TPMs sit on the motherboard as separate chips, connected to the CPU via an LPC or SPI bus. This bus is a physical trace on the circuit board, and unless the software talking to the TPM sets up an encrypted session, it carries data in plaintext. In 2021, the Dolos Group demonstrated that they could read a laptop's full disk encryption key by sniffing traffic between the TPM chip and the CPU. The key was transmitted unencrypted over an unprotected bus.

This isn't an isolated finding. A 2026 CVE documented a TPM-sniffing attack on the Moxa UC-1222A, where the full LUKS decryption key was released in plaintext during boot via a TPM2_NV_Read operation over the SPI interface. Researchers have even shown that PIN-protected BitLocker can be defeated by intercepting the SPI bus before the user enters their PIN, since the TPM's Unseal operation already exchanges key material over the wire.

The hardware that's supposed to be your root of trust is spilling secrets across an unprotected bus. No soldering required, just a logic analyzer and some patience.

Firmware TPMs (fTPM), like Intel PTT and AMD fTPM, eliminate the bus sniffing problem by running TPM functionality inside the CPU's trusted execution environment. But they introduce their own concerns, including vulnerability to timing side-channel attacks like TPM-FAIL, which recovered private ECDSA keys by measuring cryptographic operation durations.

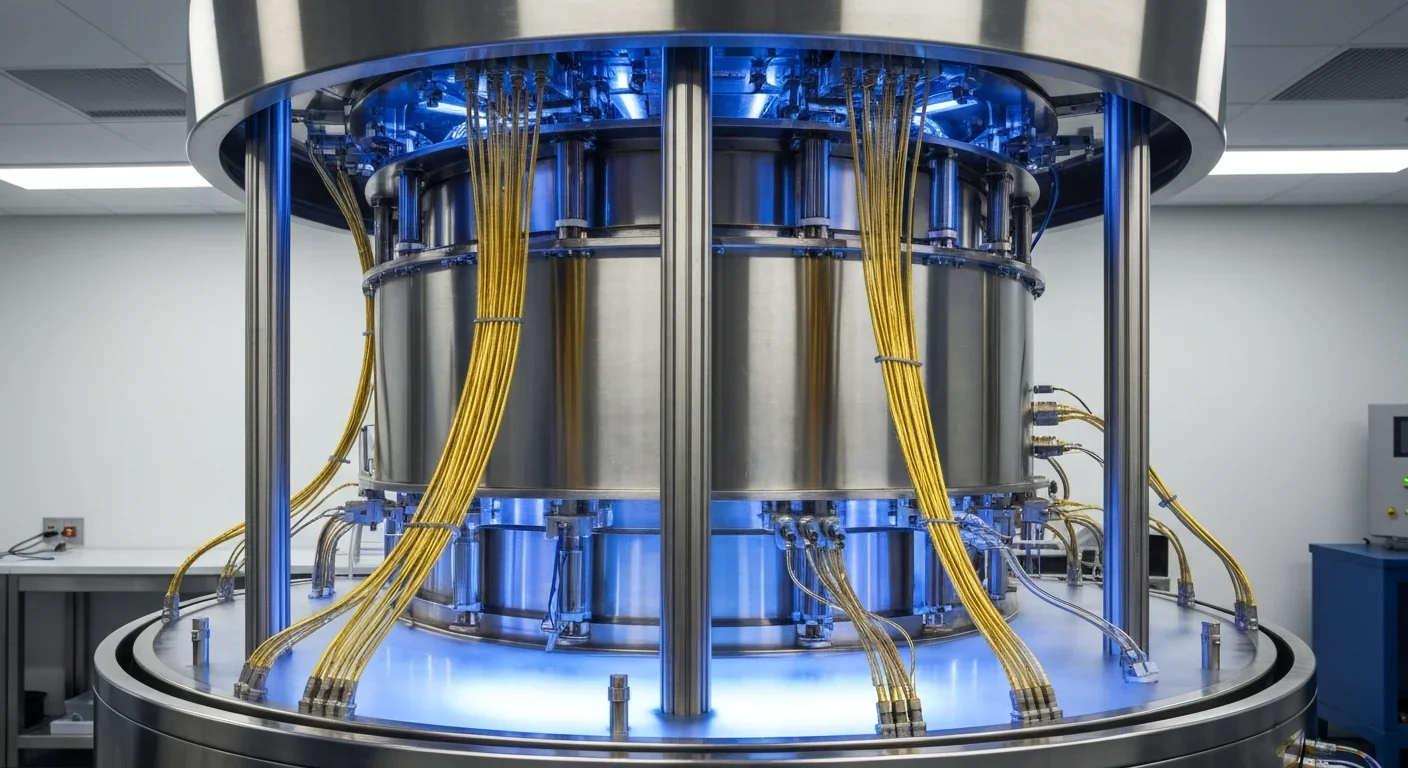

This is the problem Microsoft Pluton was designed to solve. Announced in November 2020, Pluton is a security processor integrated directly into the CPU die itself, not sitting beside it on the motherboard, not connected by a bus, but physically part of the same silicon.

Pluton is described by Microsoft as a "chip-to-cloud security technology built with Zero Trust principles at the core." It provides the same TPM 2.0 functionality, backing Windows features such as BitLocker, Windows Hello, and System Guard, but eliminates the external communication bus entirely. There's no LPC trace to tap, no SPI bus to sniff. The secrets never leave the CPU die.

But Pluton does something else that's arguably just as important: it delivers firmware updates through Windows Update. Traditional TPMs depend on OEM BIOS updates for security patches, and anyone who has managed enterprise firmware updates knows that process can take months, or simply never happen for older devices. The stakes are concrete: Microsoft's Secure Boot certificates from 2011 begin expiring in June 2026, and millions of PCs that can't run Windows 11 will never receive updated certificates because they lack TPM 2.0 and can't get OEM firmware updates.

Pluton breaks this cycle. Security patches for the root of trust itself arrive through the same channel as monthly Windows updates. No OEM dependency, no BIOS update hassle.

"Microsoft Pluton is designed to provide the functionality of the Trusted Platform Module thereby establishing the silicon root of trust."

- Microsoft Learn, Pluton Security Processor Documentation

Pluton is currently available on devices powered by AMD Ryzen 6000, 7000, 8000, 9000, and Ryzen AI Series processors; Intel Core Ultra 200V, Ultra Series 3 and Series 3 processors; and Qualcomm Snapdragon 8cx Gen 3 and Snapdragon X Series processors. That covers a wide swath of modern laptops and desktops, though enterprise fleet turnover means many organizations are still running discrete TPM hardware.

Interestingly, Pluton can be deployed alongside a discrete TPM, giving OEMs flexibility. A manufacturer might use the discrete TPM as the default for backward compatibility while making Pluton available as a security processor for advanced use cases. This dual-mode approach eases the transition.

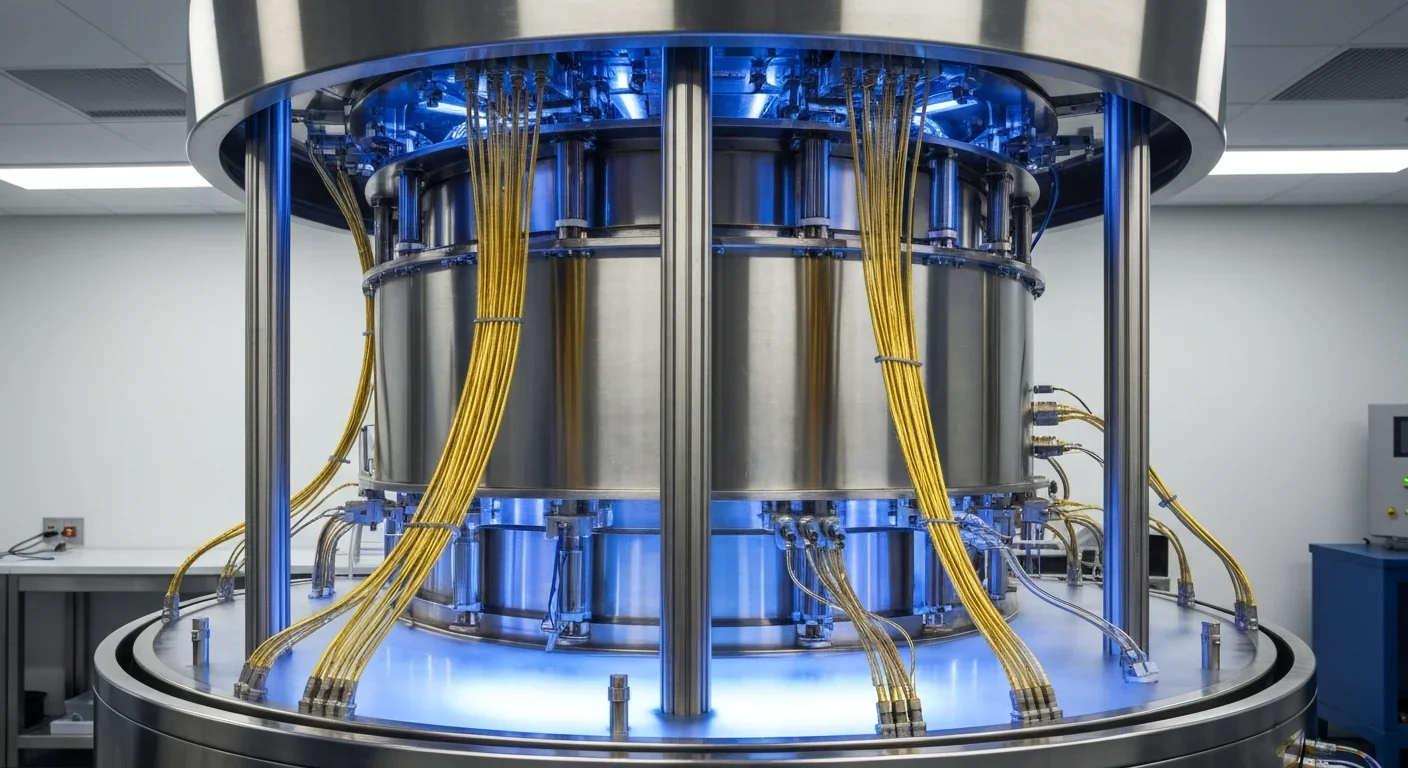

More broadly, the Trusted Computing Group (TCG) has been preparing for the future by working to integrate post-quantum cryptographic algorithms into the TPM 2.0 specification. As quantum computing threatens to break current asymmetric encryption, having quantum-resistant algorithms built into the hardware root of trust becomes critical.

The lesson from BlackLotus, LoJax, and the growing list of firmware-level attacks is clear: your security is only as strong as the hardware it's anchored to. A perfect software security stack built on compromised firmware is like a bank vault with a screen door.

For CISOs and security architects evaluating their posture, several practical takeaways emerge. First, audit your fleet's TPM status. Know which devices have discrete TPMs, firmware TPMs, or Pluton, and understand the specific attack surfaces each type presents. Second, implement remote attestation. If you're running a zero-trust architecture without hardware-based device health verification, you're doing zero-trust in name only. Third, plan your migration path to Pluton-equipped hardware, especially for roles handling sensitive data where physical access threats are realistic.

The shift from software-defined security to hardware-anchored trust isn't a trend. It's a correction. For decades, we built increasingly sophisticated security on top of firmware we couldn't verify. The attackers noticed. Now the industry is finally catching up, moving the most critical security primitives into the one place malware can't easily reach: the silicon itself.

Within the next few years, hardware attestation will become as routine as TLS certificates are today. The organizations that get ahead of this shift won't just be more secure. They'll be the only ones whose devices can prove it.
