
TL;DR: Intel's hardware transactional memory extension TSX promised massive concurrency speedups but was killed by a decade of bugs and security vulnerabilities. ARM's alternative TME takes a safer architectural approach, but hasn't yet faced the same real-world scrutiny at scale.

The next technological revolution in computing won't come from faster clock speeds or bigger caches. It will come from solving a problem that has haunted software engineers for decades: how to let multiple processor cores safely share memory without grinding to a halt. Intel thought they had the answer in 2013 when they shipped Transactional Synchronization Extensions in their Haswell processors. They were catastrophically wrong, and their failure has reshaped how we think about the fundamental contract between hardware and software.

Imagine you're a database engineer in 2013. Your system handles thousands of concurrent transactions per second, and every one of them fights for the same locks. Traditional mutex-based synchronization forces threads to wait in line, serializing what should be parallel work. Then Intel announces TSX, a set of hardware transactional memory instructions built directly into silicon that promised to change everything.

The concept was elegantly simple. Instead of acquiring locks, threads would begin "transactions" that execute speculatively. If two threads touched the same memory, the hardware would detect the conflict and roll one back. If they didn't collide, both committed simultaneously with zero lock overhead. Benchmarks showed staggering results: up to 40% faster application execution and 4-5 times more database transactions per second. SAP HANA's Delta Storage reported a 4.6x speedup in index operations under high-contention workloads with 8 threads.
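The speculate, detect, and roll back cycle can be sketched in software. What follows is a toy, single-threaded model and not how the silicon works: the `Memory` and `Transaction` classes, the per-location version counters, and the `increment` helper are all hypothetical names invented for illustration. Real hardware tracks cache lines rather than keys and usually aborts as soon as a conflict is observed, whereas this sketch only checks at commit time.

```python
class Memory:
    """Shared memory with a version counter per location (a modelling device only)."""

    def __init__(self):
        self.values = {}
        self.versions = {}


class Transaction:
    """Buffers writes privately and validates everything it touched at commit."""

    def __init__(self, memory):
        self.memory = memory
        self.seen = {}          # location -> version observed on first access
        self.write_buffer = {}  # speculative writes, invisible to others

    def _track(self, key):
        self.seen.setdefault(key, self.memory.versions.get(key, 0))

    def read(self, key):
        self._track(key)
        if key in self.write_buffer:
            return self.write_buffer[key]
        return self.memory.values.get(key, 0)

    def write(self, key, value):
        self._track(key)
        self.write_buffer[key] = value

    def commit(self):
        # Conflict check: abort if any touched location changed since first access.
        for key, version in self.seen.items():
            if self.memory.versions.get(key, 0) != version:
                return False  # abort: buffered writes are simply discarded
        # No conflict: publish the buffered writes and bump their versions.
        for key, value in self.write_buffer.items():
            self.memory.values[key] = value
            self.memory.versions[key] = self.memory.versions.get(key, 0) + 1
        return True


def increment(txn, key):
    txn.write(key, txn.read(key) + 1)


memory = Memory()

# Two transactions on different locations: both commit.
t1, t2 = Transaction(memory), Transaction(memory)
increment(t1, "a")
increment(t2, "b")
disjoint = (t1.commit(), t2.commit())    # (True, True)

# Two transactions on the same location: the second one to commit aborts.
t3, t4 = Transaction(memory), Transaction(memory)
increment(t3, "a")
increment(t4, "a")
colliding = (t3.commit(), t4.commit())   # (True, False)

print(disjoint, colliding, memory.values)
```

The aborted transaction leaves no trace in `memory.values`, which is the property that lets non-colliding threads skip locking altogether. The caller of the aborted transaction still has to retry or fall back to some other mechanism.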

It sounded too good to be true. It was.

The history of Intel TSX reads like a tragedy in five acts, and understanding it requires looking at how processor design decisions from the early 2010s collided with security realities that nobody anticipated.

Intel shipped TSX with Haswell processors in June 2013. The feature comprised two mechanisms: Hardware Lock Elision (HLE), which transparently converted lock-based code into transactions, and Restricted Transactional Memory (RTM), which gave programmers explicit control over transactional regions. Both buffered transactional writes in the processor's L1 data cache and used the cache coherence protocol to detect conflicts at cache-line granularity, which means in 64-byte units rather than per variable.
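Cache-line granularity has a practical consequence: two threads can conflict without ever touching the same variable. The sketch below illustrates this under the assumption of 64-byte lines as stated above. The `cache_line` and `conflicts` helpers and the example addresses are made up for illustration, and the model ignores details such as read-read sharing being harmless.

```python
CACHE_LINE_BYTES = 64  # line size given in the text


def cache_line(addr: int) -> int:
    """Index of the cache line that contains byte address `addr`."""
    return addr // CACHE_LINE_BYTES


def conflicts(written_by_a, touched_by_b) -> bool:
    """True if any address A writes shares a cache line with any address B touches."""
    lines_a = {cache_line(a) for a in written_by_a}
    lines_b = {cache_line(b) for b in touched_by_b}
    return not lines_a.isdisjoint(lines_b)


# Two different counters only 8 bytes apart sit on the same line: a false conflict.
print(conflicts({0x1000}, {0x1008}))  # True

# Padding the second counter onto the next 64-byte line removes the conflict.
print(conflicts({0x1000}, {0x1040}))  # False
```

This is why transactional code tends to pad or align hot data to line boundaries: unrelated variables packed together cause aborts that no amount of algorithmic care can avoid.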

By August 2014, barely a year after launch, Intel announced a correctness bug affecting Haswell, Haswell-E, Haswell-EP, and early Broadwell CPUs. The fix was a microcode update that simply disabled TSX entirely. The Broadwell-Y variant had it even worse: the bug couldn't be fixed by microcode at all, so TSX was permanently disabled in those processors.

Intel tried again with Skylake processors, re-enabling TSX. But in October 2018, another memory ordering issue surfaced, forcing Intel to disable HLE via microcode and to mitigate RTM at the cost of one performance counter whenever it was used outside SGX or SMM.

Then came 2019, and everything got much worse.

The year 2019 marked the moment when TSX transformed from a buggy-but-promising feature into a genuine security liability. Researchers discovered that the very mechanism making TSX fast, speculative execution with observable abort timing, created a highway for side-channel attacks.

The TSX Asynchronous Abort vulnerability (CVE-2019-11135) revealed that when a transaction aborted asynchronously, loads inside it that had not yet completed could still speculatively read stale data from internal processor buffers and pass it to dependent operations. The architectural state was rolled back, but the microarchitectural traces were not. Attackers could recover that data through a cache timing side channel and use it to reconstruct secrets belonging to other processes.

TSX wasn't just vulnerable in isolation. It became the preferred weapon for a whole class of attacks. ZombieLoad, RIDL, and Fallout all exploited microarchitectural data sampling, and TSX provided a particularly reliable attack vector because a faulting load inside a transaction triggers a quiet abort instead of an exception, which makes the attack fast and repeatable. The Prime+Abort attack specifically targeted Intel TSX, using transaction aborts to reveal memory access patterns across security boundaries.

The fundamental problem was architectural. Transaction failures leak sensitive data through precise timing measurements, allowing attackers to infer memory access patterns. This wasn't a bug that could be patched. It was a consequence of the design itself.

In June 2021, Intel published microcode updates that disabled TSX across Skylake through Coffee Lake and Whiskey Lake processors as a TAA mitigation. By 2023, both HLE and RTM were deprecated, and microcode updates had removed TSX support from most consumer processors.

The feature that once promised a 4-5x performance boost was dead, and the mitigations for its vulnerabilities degraded CPU performance by up to 40% in some workloads.

"Performance optimization features often create security trade-offs."

- Security analysis from Undercode Testing on Intel TSX vulnerabilities

While Intel was fighting fires, ARM quietly introduced the Transactional Memory Extension (TME) as part of the ARMv9 architecture specification in 2019-2020. On paper, ARM TME shares the same goal as Intel TSX: hardware-accelerated atomic operations for concurrent programming. But the engineering philosophy behind it is fundamentally different.

ARM TME defines four instructions, TSTART, TCOMMIT, TCANCEL, and TTEST, to manage transactions. Where Intel's approach aggressively speculated memory accesses and maintained complex speculative buffers, ARM adopted a "best-effort" model where transactions can always abort. This sounds like a weakness, but it's actually a security-conscious design choice. By assuming transactions might fail at any time, ARM reduces the need for the kind of deep speculative state that gave attackers their opening in Intel's implementation.

The ARMv9 specification also makes TME optional. Chip designers can choose whether to include it, which means not every ARM processor carries the potential attack surface. Compare this to Intel's approach, where TSX was baked into entire processor families and had to be globally disabled when problems emerged.

ARM's hardware security work extends beyond TME into the Memory Tagging Extension (MTE). The AmpereOne processor, launched in 2024, became the first datacenter system-on-chip with ARM MTE support. Its implementation stores allocation tags in ECC bits of DRAM, eliminating the typical 3% memory capacity overhead of storing tags separately. Apple's iPhone 17 and Google's Pixel 8 both use MTE's synchronous mode to detect buffer overflows and use-after-free bugs in production.
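The 3% figure follows from MTE's tag layout. MTE attaches one 4-bit allocation tag to every 16-byte granule of memory; that layout comes from the architecture, not from this article, and the helper names and the 64 GiB example below are purely illustrative. A quick check of the arithmetic:

```python
GIB = 1024 ** 3


def tag_overhead(tag_bits: int = 4, granule_bytes: int = 16) -> float:
    """Fraction of extra storage needed for tags: tag bits per data bits."""
    return tag_bits / (granule_bytes * 8)


def tag_storage_bytes(memory_bytes: int, tag_bits: int = 4, granule_bytes: int = 16) -> int:
    """Bytes of tag storage needed to cover `memory_bytes` of tagged memory."""
    granules = memory_bytes // granule_bytes
    return granules * tag_bits // 8


print(f"{tag_overhead():.3%}")            # 3.125%
print(tag_storage_bytes(64 * GIB) / GIB)  # 2.0
```

Four tag bits for every 128 data bits is 1/32 of capacity, so a machine with 64 GiB of tagged memory would otherwise set aside 2 GiB for tags. Placing the tags in otherwise unused ECC bits is what lets that reservation disappear.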

The Intel-versus-ARM narrative obscures an important data point. IBM POWER processors offered hardware transactional memory starting with POWER8 in 2014 and ran it in production workloads without the catastrophic security failures that plagued TSX, although IBM later dropped the facility in Power ISA v3.1, the POWER10 generation. IBM's approach, operating on a different microarchitecture with different speculative execution constraints, suggests that the problem wasn't hardware transactional memory as a concept. It was the specific way Intel implemented it within their speculative execution pipeline.

This matters because it reframes the question. Instead of asking "is hardware transactional memory fundamentally broken?" we should ask "what implementation constraints make it safe?" The answer appears to involve careful management of speculative state, conservative abort semantics, and architectural designs that don't expose timing side-channels through transaction failures.

Meanwhile, the software world has adapted. Lock-free and wait-free algorithms using compare-and-swap operations remain the practical alternative for most concurrent workloads. Double-width compare-and-swap and other atomic primitives offer limited but predictable functionality. Software transactional memory implementations exist but have never achieved the performance that hardware approaches promised. The gap that TSX was supposed to fill remains open.
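The compare-and-swap pattern is a retry loop: read the current value, compute the new one, and publish it only if nobody else changed the value in between. Python has no hardware CAS instruction, so the sketch below emulates one. The `AtomicCell` class is a hypothetical stand-in whose internal lock plays the role of the atomicity a real CAS instruction provides, and `fetch_add` and `hammer` are illustrative names.

```python
import threading


class AtomicCell:
    """Emulated atomic word. The internal lock only stands in for hardware atomicity."""

    def __init__(self, value: int = 0):
        self._value = value
        self._guard = threading.Lock()

    def load(self) -> int:
        with self._guard:
            return self._value

    def compare_and_swap(self, expected: int, new: int) -> bool:
        """Store `new` only if the cell still holds `expected`; report success."""
        with self._guard:
            if self._value != expected:
                return False
            self._value = new
            return True


def fetch_add(cell: AtomicCell, delta: int) -> int:
    """Classic CAS retry loop: keep trying until our update wins."""
    while True:
        old = cell.load()
        if cell.compare_and_swap(old, old + delta):
            return old
        # Another thread got in first; reload and try again.


def hammer(cell: AtomicCell, times: int) -> None:
    for _ in range(times):
        fetch_add(cell, 1)


cell = AtomicCell()
threads = [threading.Thread(target=hammer, args=(cell, 1000)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(cell.load())  # 4000
```

The loop never blocks while holding a resource another thread needs, which is the sense in which it is lock-free. The limitation is equally visible: it updates exactly one word, whereas a hardware transaction could have covered several unrelated locations at once.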

Here's where honest assessment demands skepticism. ARM TME is optional in the ARMv9 specification, meaning many implementations simply don't include it. While the specification is architecturally sound, the real test of any hardware concurrency feature is what happens when millions of devices run adversarial workloads against it for years.

Intel TSX looked great in controlled benchmarks too. The vulnerabilities didn't surface until researchers specifically probed the microarchitectural interactions between speculative execution and cache coherence. ARM TME hasn't faced that level of scrutiny yet, partly because it hasn't been deployed at anywhere near the same scale.

The conservative best-effort model does reduce the theoretical attack surface. Transactions that abort readily create smaller windows for timing-based observations. But "smaller window" isn't the same as "closed window." As the Meltdown and Spectre vulnerabilities demonstrated, even ARM-based processors aren't immune to speculative execution attacks. Any hardware feature that touches speculative state remains a potential target.

The honest conclusion is that ARM TME hasn't been proven safe. It has been proven different. Whether those differences are sufficient to avoid Intel's fate remains an open question that only time, scale, and adversarial research will answer.

"Hardware-level vulnerabilities require hardware-level solutions."

- Security researchers at Undercode Testing on CPU vulnerability mitigation

If you're building concurrent systems today, the practical implications are clear. Don't design architectures that depend on hardware transactional memory being available. The TSX saga proved that hardware features can disappear overnight through microcode updates, breaking assumptions that software depends on.

Treat hardware TM as an optimization hint, not a foundation. Write correct lock-based or lock-free code first, then layer hardware acceleration on top when available. This is exactly the pattern ARM's best-effort TME model encourages: your code must work when transactions abort, because they always might.
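In code, "optimization hint, not a foundation" usually means a bounded number of transactional attempts followed by an ordinary lock. Here is a minimal sketch of that shape. The `try_transaction` hook is hypothetical: it stands for whatever mechanism a platform offers, returning `True` if the body ran and committed and `False` if it aborted with no effect. The `no_htm` and `always_commits` stand-ins exist only to exercise both paths.

```python
import threading
from typing import Callable


def no_htm(body: Callable[[], None]) -> bool:
    """Stand-in for a platform without usable HTM: every attempt aborts."""
    return False


def always_commits(body: Callable[[], None]) -> bool:
    """Stand-in for an uncontended transaction: run the body and commit."""
    body()
    return True


def run_atomically(
    body: Callable[[], None],
    fallback_lock: threading.Lock,
    try_transaction: Callable[[Callable[[], None]], bool] = no_htm,
    max_attempts: int = 3,
) -> str:
    """Try the fast path a few times, then take the lock. Returns the path used."""
    for _ in range(max_attempts):
        if try_transaction(body):
            return "transaction"
    # Correctness lives here: this path must work even if the fast path never does.
    # A real implementation would also make each transaction check this lock,
    # so the two paths cannot run the body concurrently.
    with fallback_lock:
        body()
    return "lock"


lock = threading.Lock()
counter = {"value": 0}


def bump() -> None:
    counter["value"] += 1


print(run_atomically(bump, lock))                                  # lock
print(run_atomically(bump, lock, try_transaction=always_commits))  # transaction
print(counter["value"])                                            # 2
```

Because the default hook always reports an abort, the program behaves identically on hardware where the feature has been disabled by microcode. It just runs at lock speed.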

For the broader technology industry, Intel's TSX failure carries a lesson about the hardware-software contract. When hardware vendors promise new capabilities, software teams build on those promises. When the hardware breaks, the software breaks too. The more deeply embedded the dependency, the more catastrophic the failure.

The next generation of hardware concurrency features, whatever form they take, will need to be designed with security as a first-class constraint rather than an afterthought. Intel learned this lesson at enormous cost. ARM appears to have internalized it, at least architecturally. But the ultimate test isn't the specification. It's what happens when the specification meets reality at scale.

We're still waiting for that test.

Saturn's moon Titan may harbour liquid water beneath its frozen crust, kept from freezing by ammonia acting as a natural antifreeze. New Cassini data suggests the interior could be slush with warm water pockets rather than a global ocean, and NASA's Dragonfly mission launching in 2028 aims to investigate whether this exotic environment could support life.

Bacteroides thetaiotaomicron uses 88 specialized gene clusters and over 260 enzymes to decode and digest dietary fibers humans can't break down, converting them into essential short-chain fatty acids. When fiber runs out, it eats your gut's protective mucus instead, with cascading health consequences.

Scientists are restoring Ice Age ecological dynamics through rewilding projects like Siberia's Pleistocene Park and de-extinction efforts by Colossal Biosciences. These initiatives aim to reintroduce megafauna or their proxies to repair broken ecosystems, protect Arctic permafrost, and slow climate change.

The cheerleader effect is a proven cognitive bias where people look more attractive in groups because the brain automatically averages faces, smoothing out individual flaws. Research shows the sweet spot is 3-5 people, it works for all genders, and it has real implications for dating apps and social media strategy.

The Hawaiian bobtail squid farms bioluminescent bacteria in a specialized light organ to erase its shadow in moonlit waters. This partnership, where bacteria reshape the squid's body and communicate through quorum sensing, is teaching scientists how host-microbe relationships work and inspiring new medical and biotech applications.

Millions are leaving social media platforms driven by privacy scandals, mental health concerns, and algorithmic manipulation. While 60% relapse within a week, those who stay away report dramatically improved wellbeing, and decentralized alternatives like Bluesky are surging.

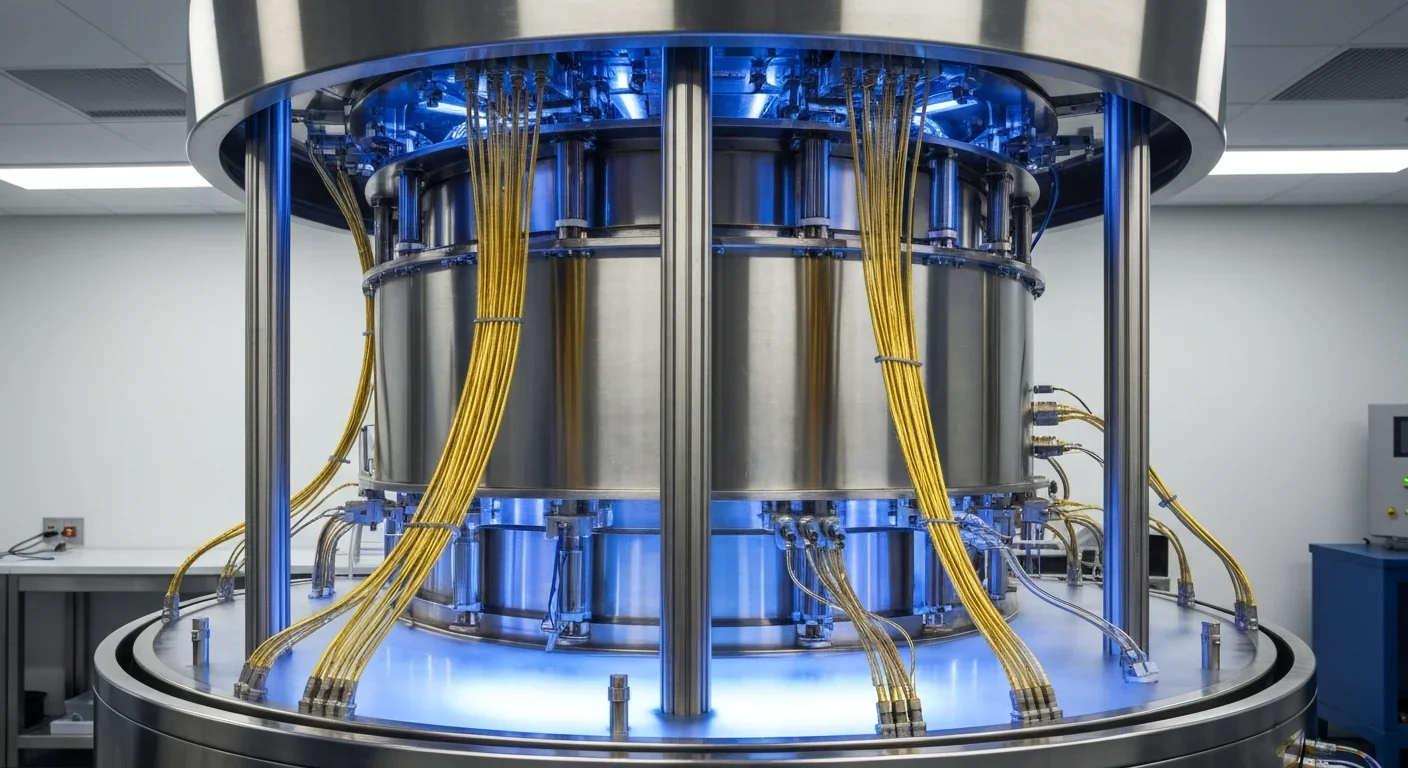

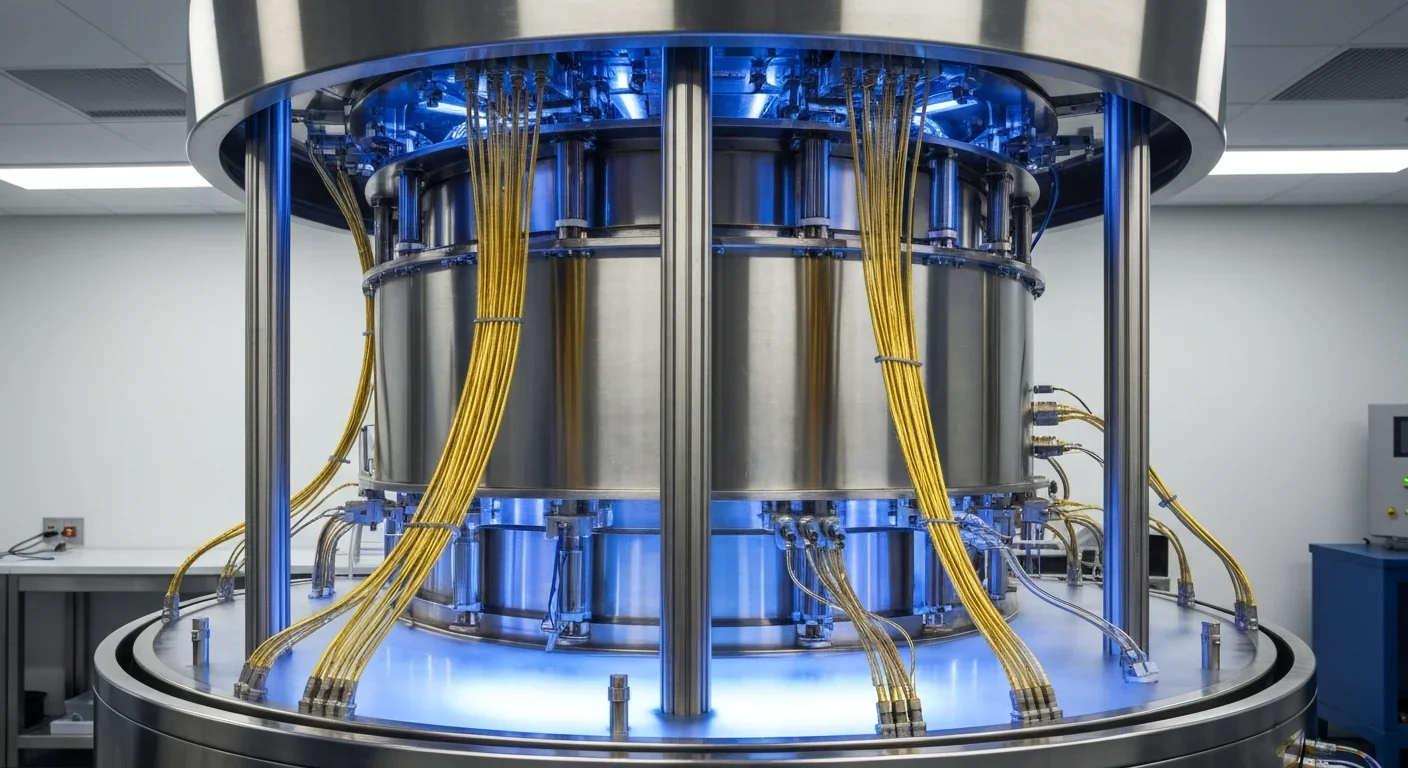

Building a reliable quantum computer requires roughly 1,000 fragile physical qubits per logical qubit due to surface code error correction overhead. New code families like LDPC and neutral-atom platforms are racing to slash that ratio, with some teams claiming it could drop to as few as 5-to-1.