TL;DR: Intel's Optane memory, built on 3D XPoint technology, delivered groundbreaking performance between DRAM and NAND flash but failed commercially because low volumes kept manufacturing costs high, a production-cost trap it never escaped. Intel announced the wind-down in 2022, and Optane's legacy now shapes CXL and next-generation memory technologies.

The next technological revolution won't always be what you expect. Sometimes, the most brilliant engineering achievement of a generation doesn't fail because the science was wrong. It fails because the market wasn't ready to pay for it. Intel's Optane memory, built on a technology called 3D XPoint (pronounced "cross point"), was supposed to fundamentally reshape how computers handle data. It delivered on nearly every technical promise. And then Intel killed it anyway.

To understand why Optane mattered, you need to understand a problem that's haunted computing for decades. Every computer juggles two fundamentally different types of memory. There's DRAM, the fast, volatile working memory that loses everything when the power goes out. And there's NAND flash, the slower, persistent storage that keeps your files safe but takes roughly 1,000 times longer to access than DRAM.

That gap between fast-and-forgetful and slow-and-persistent has been one of computing's most stubborn bottlenecks. Every database query, every application launch, every virtual machine migration gets stuck waiting for data to shuttle between these two very different worlds.

In mid-2015, Intel and Micron jointly unveiled a new kind of non-volatile memory that promised to bridge this divide. According to the two companies, 3D XPoint was up to 1,000 times faster than NAND flash and ten times denser than conventional DRAM. It could remember data without power, like a hard drive, but access it at speeds approaching RAM. For the first time, a single technology could sit comfortably between these two tiers, blurring the line between memory and storage in ways that computer architects had been dreaming about for years.

3D XPoint promised the impossible: memory that was nearly as fast as DRAM, nearly as cheap as NAND, and could remember data without power. It delivered on the physics. The economics were another story entirely.

The tension between speed and persistence in computing memory isn't new. It goes all the way back to the earliest days of digital computing, when engineers first had to choose between magnetic core memory that could remember data and faster circuits that couldn't.

For most of computing history, the solution has been a hierarchy. Tiny amounts of blazing-fast cache sit closest to the processor. Then comes DRAM, measured in gigabytes, which serves as the main working space. Below that, NAND flash storage holds terabytes of data at much lower cost but with access times measured in microseconds rather than nanoseconds.

This layered approach works, but it creates a performance cliff. When an application needs data that isn't already loaded into DRAM, it has to wait for storage to deliver it. In data center environments, where databases and analytics platforms process millions of queries per second, those waits add up fast. They translate directly into slower responses, higher costs, and servers that spend much of their time simply waiting.
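The size of that cliff can be estimated with a weighted average. The sketch below is illustrative only: the two latency constants are assumed order-of-magnitude values chosen to match the roughly 1,000x gap described earlier, not measurements, and `average_access_ns` is a hypothetical helper name.

```python
# Assumed order-of-magnitude latencies for illustration, not measurements.
DRAM_NS = 100        # assumed DRAM access time in nanoseconds
NAND_NS = 100_000    # assumed NAND SSD access time (about 100 microseconds)


def average_access_ns(dram_hit_rate: float,
                      fast_ns: float = DRAM_NS,
                      slow_ns: float = NAND_NS) -> float:
    """Expected access time when a fraction of requests is served from DRAM."""
    if not 0.0 <= dram_hit_rate <= 1.0:
        raise ValueError("dram_hit_rate must be between 0 and 1")
    return dram_hit_rate * fast_ns + (1.0 - dram_hit_rate) * slow_ns


if __name__ == "__main__":
    for hit_rate in (1.0, 0.99, 0.90):
        average = average_access_ns(hit_rate)
        print(f"{hit_rate:.0%} DRAM hits: {average:,.0f} ns on average")
```

With these assumed numbers, a miss rate of just 1% raises the average access time from 100 ns to about 1,100 ns, which is why even rare trips to storage dominate the total.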

Previous attempts to close this gap had limited success. NVDIMM-N modules combined DRAM with NAND flash backup, so they ran at DRAM-class latencies of around 100 nanoseconds but were limited to modest, DRAM-sized capacities. Various forms of phase-change memory and resistive RAM had shown promise in labs but hadn't made it to commercial products that data center operators could actually buy and deploy. The industry needed something that could be manufactured at scale, plugged into existing server infrastructure, and deliver performance that meaningfully changed how applications could be designed.

What Intel and Micron proposed with 3D XPoint was exactly that kind of ambitious bet. Not an incremental improvement to existing memory, but an entirely new category sitting between the two dominant tiers.

The architecture of 3D XPoint was genuinely novel. Unlike NAND flash, which stores data by trapping electrical charges in floating gates using transistors, 3D XPoint used a transistor-less cross-point structure. Data was stored at the intersections of perpendicular word lines and bit lines in a three-dimensional checkerboard pattern.

Each memory cell used chalcogenide materials, specifically germanium-antimony-tellurium (GeSbTe) alloys, that could switch between two stable resistance states. Precise electrical pulses either heated the material to around 350°C to crystallize it into a low-resistance state (representing one data value), or melted it above 600°C and quenched it rapidly into a high-resistance amorphous state (representing the other). This bulk resistance change, combined with an Ovonic Threshold Switch selector that replaced the traditional transistor, allowed each cell to be individually addressed.
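The addressing idea can be shown with a toy model. This is only a sketch of the cross-point concept, not of the real device: the class name `CrossPointLayer`, the bit-to-resistance mapping, and the single-layer simplification are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Assumed mapping for this sketch: low resistance reads as 1, high as 0.
LOW_RESISTANCE = 1
HIGH_RESISTANCE = 0


@dataclass
class CrossPointLayer:
    """Toy model of one layer: a cell at every word-line/bit-line crossing."""
    word_lines: int
    bit_lines: int
    cells: list = field(init=False, repr=False)

    def __post_init__(self) -> None:
        # Every cell starts in the high-resistance state.
        self.cells = [[HIGH_RESISTANCE] * self.bit_lines
                      for _ in range(self.word_lines)]

    def _check(self, word_line: int, bit_line: int) -> None:
        if not (0 <= word_line < self.word_lines
                and 0 <= bit_line < self.bit_lines):
            raise IndexError("no cell at that intersection")

    def write(self, word_line: int, bit_line: int, bit: int) -> None:
        """Select one word line and one bit line; only their crossing changes."""
        self._check(word_line, bit_line)
        self.cells[word_line][bit_line] = (
            LOW_RESISTANCE if bit else HIGH_RESISTANCE)

    def read(self, word_line: int, bit_line: int) -> int:
        """Sense the resistance state of the single selected cell."""
        self._check(word_line, bit_line)
        return self.cells[word_line][bit_line]


if __name__ == "__main__":
    layer = CrossPointLayer(word_lines=4, bit_lines=4)
    layer.write(2, 3, 1)
    print(layer.read(2, 3), layer.read(2, 2))
```

The point of the model is that a (word line, bit line) pair identifies exactly one cell, so individual cells can be read or written without erasing a whole block first.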

The results were impressive. First-generation Optane SSDs achieved read latencies under 10 microseconds with throughput reaching 2 GB/s. The DC P4800X, Intel's flagship data center drive, could deliver 95,000 IOPS at just 9 microsecond latency. For comparison, even the fastest NAND SSDs of that era needed 50 to 100 microseconds for similar operations.
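Latency and IOPS are two views of the same behavior. As a rough sketch, Little's law bounds throughput by the number of requests in flight divided by the time each one takes. The function name `iops_ceiling` is hypothetical, and the model assumes every request completes in exactly the stated latency, which real drives do not guarantee.

```python
def iops_ceiling(latency_us: float, queue_depth: int = 1) -> float:
    """Idealized upper bound on IOPS from Little's law.

    throughput = outstanding requests / time per request
    """
    if latency_us <= 0:
        raise ValueError("latency_us must be positive")
    if queue_depth < 1:
        raise ValueError("queue_depth must be at least 1")
    return queue_depth * 1_000_000 / latency_us


if __name__ == "__main__":
    for latency_us in (9, 50, 100):
        bound = iops_ceiling(latency_us)
        print(f"{latency_us} us per request: at most {bound:,.0f} IOPS")
```

At 9 microseconds per request the bound for a single outstanding request is about 111,000 IOPS, while at 100 microseconds it is 10,000. The 95,000 IOPS figure quoted above sits just under the first bound, which would be consistent with roughly one request in flight, although the text does not state the queue depth behind that number.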

"Intel Optane SSDs for the data center lets users do more work with the same server with an outcome of lower TCO costs and greater expanding capabilities."

- ScyllaDB Performance Analysis

The persistent memory modules went even further. Optane DC Persistent Memory DIMMs, which plugged directly into standard memory slots, offered capacities up to 512 GB per module with nanosecond-scale latencies only two to three times higher than DRAM. They supported two operating modes: Memory Mode, which used DRAM as a cache to transparently expand memory capacity, and App Direct Mode, which exposed the persistent nature of the media for applications designed to exploit it.

Intel rolled out Optane across three distinct product tiers, each targeting a different slice of the market. The strategy was ambitious: own the entire gap between DRAM and NAND.

At the enterprise level, Optane DC SSDs like the P4800X and later the P5800X targeted data centers running latency-sensitive workloads. Database operators and in-memory analytics platforms could use these drives as ultra-fast storage tiers, keeping hot data accessible at speeds that NAND simply couldn't match. ScyllaDB's testing showed the drives were extremely responsive under any load, with low latency that helped server CPUs stay better utilized instead of stalling on I/O waits.

The Optane Persistent Memory DIMMs represented the most revolutionary tier. By plugging directly into DDR memory slots, they allowed servers to have terabytes of addressable memory per socket at costs far below equivalent DRAM capacity. Software frameworks like Intel's Persistent Memory Development Kit (PMDK) enabled developers to build crash-consistent data structures that could survive power failures without filesystem overhead.
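To give a feel for what "crash-consistent" means, here is a minimal sketch of one common ordering pattern: write the data, make it durable, and only then set a flag that marks it valid. It does not use PMDK and is not Intel's API. An ordinary temporary file and `mmap.flush()` stand in for a DAX-mapped persistent memory region and CPU cache flushes, and the layout, `create_region`, `store`, and `load` are all hypothetical names for illustration.

```python
import mmap
import os
import struct
import tempfile

REGION_SIZE = 4096                 # arbitrary region size for the sketch
LENGTH = struct.Struct("<I")       # 4-byte payload length stored after the flag
DATA_START = 1 + LENGTH.size       # byte 0 is the valid flag


def create_region(path: str) -> None:
    """Create a zero-filled file standing in for a persistent memory region."""
    with open(path, "wb") as f:
        f.write(b"\x00" * REGION_SIZE)


def store(path: str, payload: bytes) -> None:
    """Write the payload, flush it, and only then publish it via the flag."""
    if len(payload) > REGION_SIZE - DATA_START:
        raise ValueError("payload too large for region")
    with open(path, "r+b") as f, mmap.mmap(f.fileno(), REGION_SIZE) as region:
        region[0:1] = b"\x00"                      # invalidate the old record
        region.flush()
        region[1:DATA_START] = LENGTH.pack(len(payload))
        region[DATA_START:DATA_START + len(payload)] = payload
        region.flush()                             # data durable before flag
        region[0:1] = b"\x01"                      # publish the record
        region.flush()


def load(path: str):
    """Return the payload, or None if no complete record was published."""
    with open(path, "rb") as f, mmap.mmap(
            f.fileno(), REGION_SIZE, access=mmap.ACCESS_READ) as region:
        if region[0:1] != b"\x01":
            return None
        (length,) = LENGTH.unpack(region[1:DATA_START])
        return bytes(region[DATA_START:DATA_START + length])


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as directory:
        path = os.path.join(directory, "region.bin")
        create_region(path)
        store(path, b"hello, persistent world")
        print(load(path))
```

If the process dies before the final flag write, a later `load` sees the flag cleared and ignores the half-written payload. Libraries built for persistent memory handle far more than this sketch does, including multi-field transactions and hardware-specific flush ordering.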

On the consumer side, Intel offered small M.2 modules in 16 GB and 32 GB capacities, designed as intelligent caching layers for systems still using traditional hard drives. These could boost HDD data access speeds by up to 1,400% according to Intel's own benchmarks. Even today, you can find these 16 GB modules on eBay for about $7, still serving as cheap cache upgrades for legacy systems.

Here's where the story turns cautionary. Despite delivering everything it promised technically, Optane ran headlong into a brutal economic reality that no amount of engineering could solve.

The core problem was what industry analysts call the production-cost trap. Any new memory technology enters the market with low production volumes and minimal ecosystem support. Low volume means high per-unit costs. High prices suppress demand. And suppressed demand prevents the scale-up needed to bring costs down. It's a vicious cycle, and Optane got trapped in it.
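The feedback loop can be sketched as a toy simulation. Everything here is an assumption for illustration: unit cost follows a simple learning curve (cost falls by a fixed fraction each time cumulative volume doubles), price equals cost, and demand shrinks as price rises. None of the parameter values come from Intel or Micron data, and `simulate` is a hypothetical helper.

```python
import math


def simulate(initial_units: float,
             periods: int = 10,
             first_unit_cost: float = 100.0,
             learning_rate: float = 0.8,
             reference_price: float = 10.0,
             reference_demand: float = 10_000.0,
             elasticity: float = 1.5) -> list:
    """Return the unit cost in each period of a toy volume/cost loop."""
    # Negative exponent: each doubling of volume multiplies cost by learning_rate.
    exponent = math.log2(learning_rate)
    cumulative = float(initial_units)
    costs = []
    for _ in range(periods):
        unit_cost = first_unit_cost * cumulative ** exponent
        costs.append(unit_cost)
        # Assumed zero margin (price equals cost); higher prices cut demand.
        demand = reference_demand * (unit_cost / reference_price) ** -elasticity
        cumulative += demand
    return costs


if __name__ == "__main__":
    newcomer = simulate(initial_units=1_000)
    incumbent = simulate(initial_units=1_000_000)
    for period, (new_cost, old_cost) in enumerate(zip(newcomer, incumbent)):
        print(f"period {period}: newcomer {new_cost:.2f}, incumbent {old_cost:.2f}")
```

In this model the technology that starts with less volume is more expensive in every period, so it also sells less in every period and never catches up on cost by volume alone. That is the shape of the trap, even though the real economics involved many more factors.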

The partnership between Intel and Micron, which had jointly developed 3D XPoint, began fracturing under these pressures. The two companies announced in 2018 that they would end joint development, and in 2021 Micron abandoned 3D XPoint altogether and agreed to sell its fabrication facility in Lehi, Utah to Texas Instruments for $900 million. That left Intel carrying the technology alone, and yield challenges pushed unit costs even higher.

Intel's Non-Volatile Memory Solutions Group was bleeding money. Operating losses exceeded $500 million annually in its final years. Optane's pricing sat in an uncomfortable middle ground: cheaper per gigabyte than DRAM but significantly more expensive than NAND flash. Many potential customers found they could solve their performance problems with larger DRAM pools or faster NVMe SSDs at lower total cost.

In July 2022, Intel announced the wind-down of the Optane division, effectively ending 3D XPoint development. The technology that was supposed to create a new memory tier instead became a cautionary tale about the distance between technical excellence and commercial viability.

The problem Optane tried to solve hasn't gone away. If anything, the explosion of AI workloads and real-time analytics has made the memory-storage gap more painful than ever. Several competing approaches are now vying to address it.

The most prominent successor is Compute Express Link (CXL), an open interconnect standard that allows memory to be shared and pooled across servers. Where Optane required Intel-specific hardware and a tightly coupled architecture, CXL offers a vendor-neutral approach. As the authors of "Rethinking PM Crash Consistency in the CXL Era" (arXiv, 2025) put it, "With the sunset of Intel Optane comes the rise of Compute Express Link (CXL) for persistent memory." But CXL introduces its own complexity. Its disaggregated architecture expands the fault model, making crash consistency harder to guarantee than in Optane's simpler single-host design.

Samsung is taking a different angle entirely. The company's revived Z-NAND technology targets a 15x performance increase over traditional NAND, paired with GPU-Initiated Direct Storage (GIDS) that bypasses the CPU and DRAM entirely. For AI data centers, where GPUs need massive amounts of fast-accessible data, this could fill part of the niche that Optane once targeted.

Meanwhile, the embedded memory market is taking a more cautious approach. Companies like Everspin, Weebit Nano, and Samsung are deploying STT-MRAM and ReRAM in microcontrollers and system-on-chip designs first, building manufacturing maturity in lower-stakes applications before targeting enterprise. It's arguably the smarter path, avoiding the production-cost trap by starting small.

As of 2025, the persistent memory market continues to expand with these alternatives, including CXL-enabled systems and advanced STT-MRAM and ReRAM technologies. The gap between DRAM and NAND remains, but the approach to filling it has fundamentally shifted from one company's proprietary bet to an ecosystem of open standards and diverse technologies.

Intel's Optane story is more than a hardware footnote. It's a case study in something that happens more often than technologists like to admit: a genuinely superior technology losing to market realities.

The lesson isn't that Optane was bad. It was remarkable. The sub-10 microsecond latencies, the terabytes of persistent addressable memory, the ability to treat memory as both fast and durable were real achievements that changed how engineers think about memory hierarchies. The software concepts pioneered for Optane, from PMDK libraries to Linux DAX mode, continue to influence how persistent memory is programmed regardless of the underlying hardware.

But technical excellence alone doesn't build markets. You also need manufacturing scale, a willing ecosystem of partners, competitive pricing against "good enough" alternatives, and timing that matches what customers actually need right now, not what they'll need in five years.

Within the next decade, you'll likely interact with systems that finally deliver on Optane's original promise: fast, persistent, byte-addressable memory that blurs the line between RAM and storage. The technology will probably come through CXL, or Samsung's next-generation flash, or some combination we haven't imagined yet. And the engineers who build those systems will owe a debt to the audacious, expensive, ultimately doomed experiment that showed everyone what was possible.

Optane bridged the DRAM-NAND gap. It just couldn't bridge the market gap. And in technology, sometimes that's the harder problem to solve.

Saturn's moon Titan may harbour liquid water beneath its frozen crust, kept from freezing by ammonia acting as a natural antifreeze. New Cassini data suggests the interior could be slush with warm water pockets rather than a global ocean, and NASA's Dragonfly mission launching in 2028 aims to investigate whether this exotic environment could support life.

The cerebellum, long dismissed as merely a motor coordinator, forms dense circuits with the prefrontal cortex that shape cognition and emotion. Disruption of these pathways is now linked to schizophrenia, autism, and ADHD, opening new frontiers in diagnosis and non-invasive brain stimulation therapies.

Research shows the sharing economy often increases total resource consumption through the Jevons paradox and rebound effects. Ride-sharing adds billions of vehicle miles, co-working spaces use more energy per worker, and diffused responsibility erodes conservation behavior. Breaking the paradox requires congestion pricing, accountability design, and matching sharing models to appropriate resource types.

Illusory superiority causes most people to rate themselves above average in driving, intelligence, and ethics. This bias is rooted in metacognitive blind spots, shaped by culture, and carries real costs in healthcare, finance, and leadership. Structured feedback and institutional safeguards can help, but require ongoing effort.

Eastern skunk cabbage generates its own body heat through the alternative oxidase pathway, maintaining temperatures up to 35°C above freezing air and melting surrounding snow. This thermogenic ability, shared by roughly 90 plant species worldwide, reveals a level of metabolic sophistication that challenges assumptions about plant passivity.

America has 28 vacant homes for every homeless person, yet homelessness hit record highs in 2024. Speculative investment, geographic mismatches, and political barriers explain the paradox, while Finland and Vienna show that Housing First and social housing models can work when the political will exists.

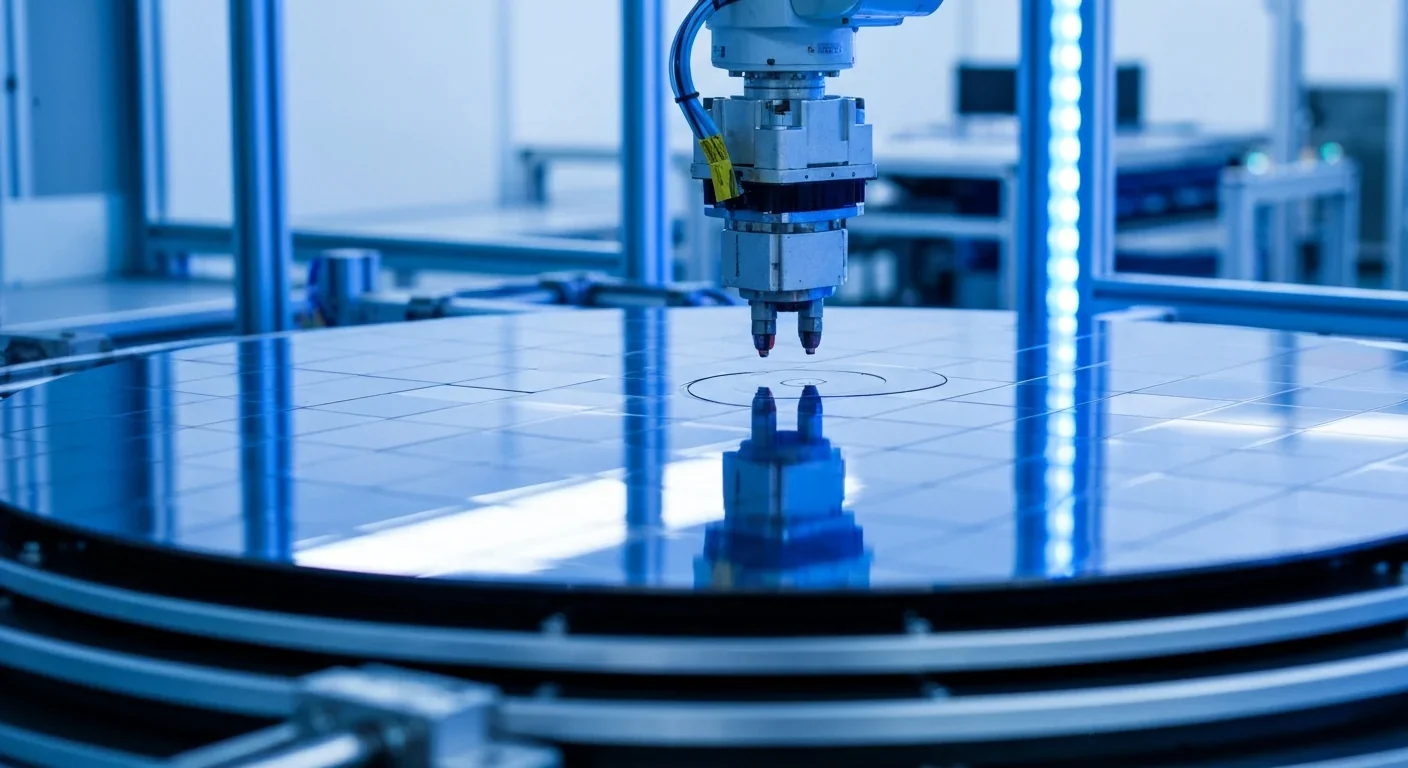

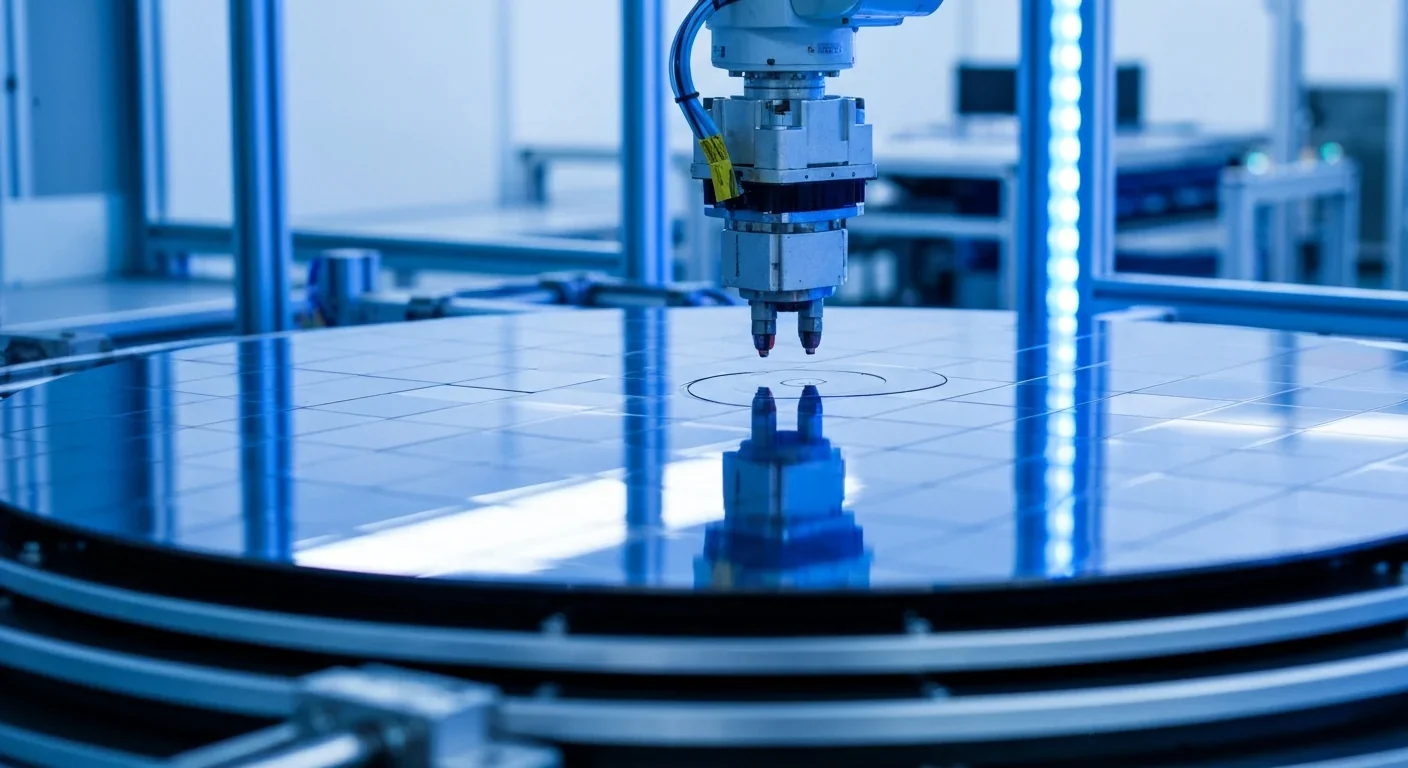

Wafer-on-wafer bonding fuses logic and memory silicon at the atomic level, delivering up to 100x interconnect density over traditional packaging. TSMC, Intel, and Samsung are racing to commercialize the technology as AI chips hit the memory bandwidth wall.